Created by Istio founders, Tetrate Service Bridge (TSB) is the edge-to-workload application connectivity platform that provides enterprises with a consistent, unified way to connect and secure services across an entire mesh-managed environment.

In the first part of this article, we’ll explain why organizations need an application networking platform and which problems TSB solves for enterprises. In the second part, we’ll describe two demos that zoom in on specific problem areas: (1) automatic failover at runtime, and (2) end-to-end encryption, authorization, and authentication across cloud and data center boundaries. This content was originally presented by Zack Butcher and Adam Zwickey at Tetrate’s recent webinar, “Intro to Tetrate Service Bridge: Connecting and Securing Apps wherever they Run.”

TSB Overview

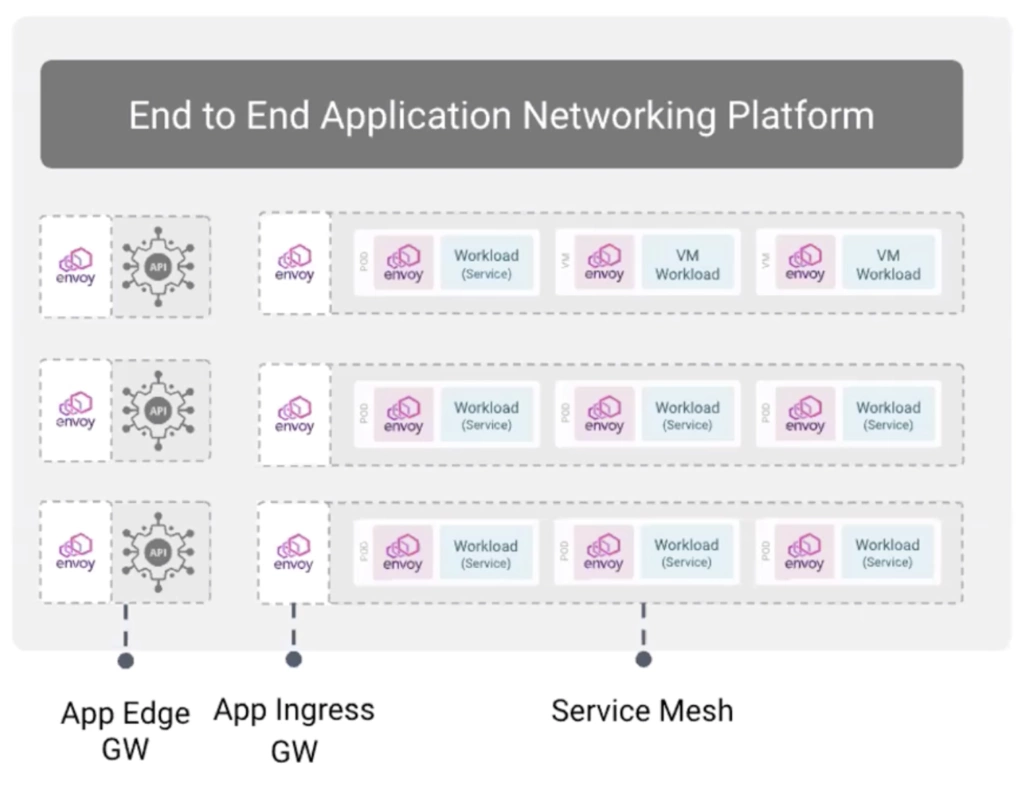

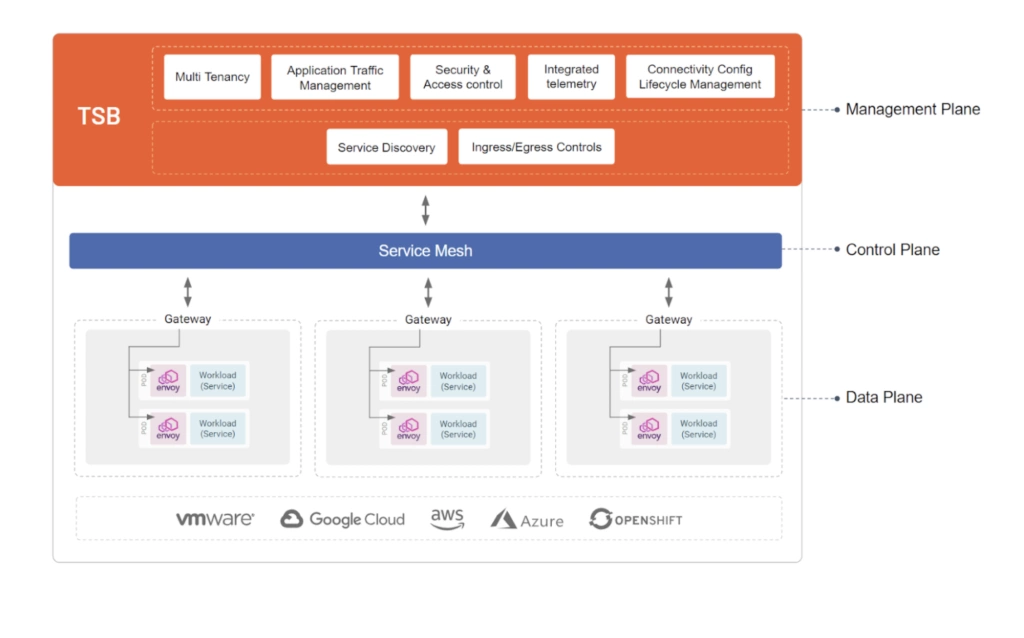

Today’s enterprises require a layer of infrastructure that allows applications to communicate in any environment, on any compute. Tetrate Service Bridge (TSB) is an application networking platform that provides this layer. Built on top of the open source projects Istio, Envoy, and Apache SkyWalking, TSB is a management plane for a multi-cluster service mesh, or for multiple meshes. In simplest terms, its job is to tame the complexity of distributed systems and make it simple for application developers and operators to do what they need to do in an organization.

With every industry undergoing digital transformation, enterprises are modernizing and migrating to the cloud to achieve organizational agility. To ship faster, organizations are moving to application stacks built on microservices and cloud-native architecture, while traditional data centers now coexist with private and public clouds. By connecting traditional and modern workloads seamlessly, TSB makes it faster and safer to modernize and migrate incrementally without fear of downtime, and to connect, secure, control, and observe applications wherever they run.

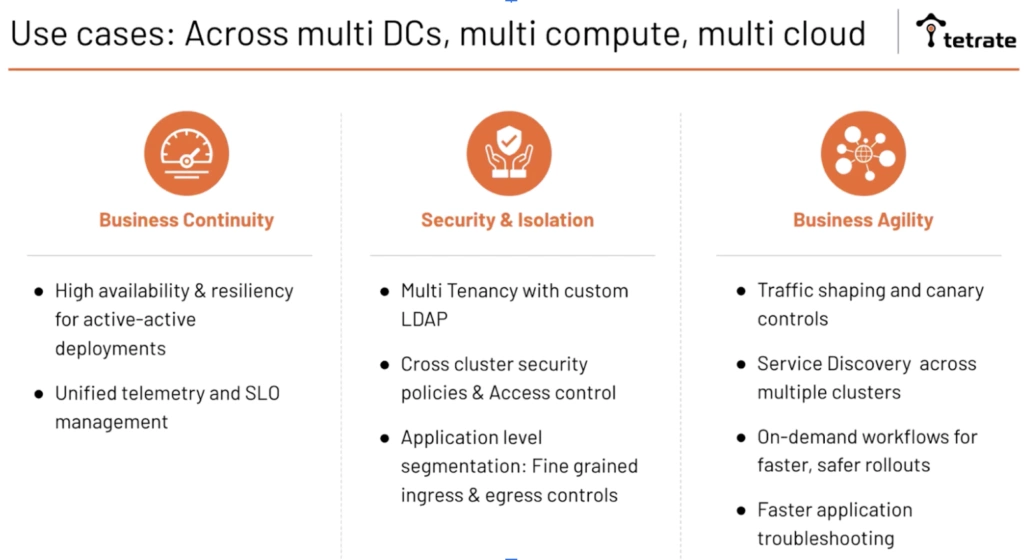

Operators can build highly available, resilient applications and gain global visibility into how those applications are behaving. Application developers can iterate faster and more safely using mesh features like traffic shaping and canary deployments, along with runtime features that TSB facilitates, like cross-cluster service discovery. And security teams can move toward a Zero Trust posture in the application itself, keeping cross-cluster communication secure with consistent identities, policies, and encryption in transit, so that applications are secure by default.

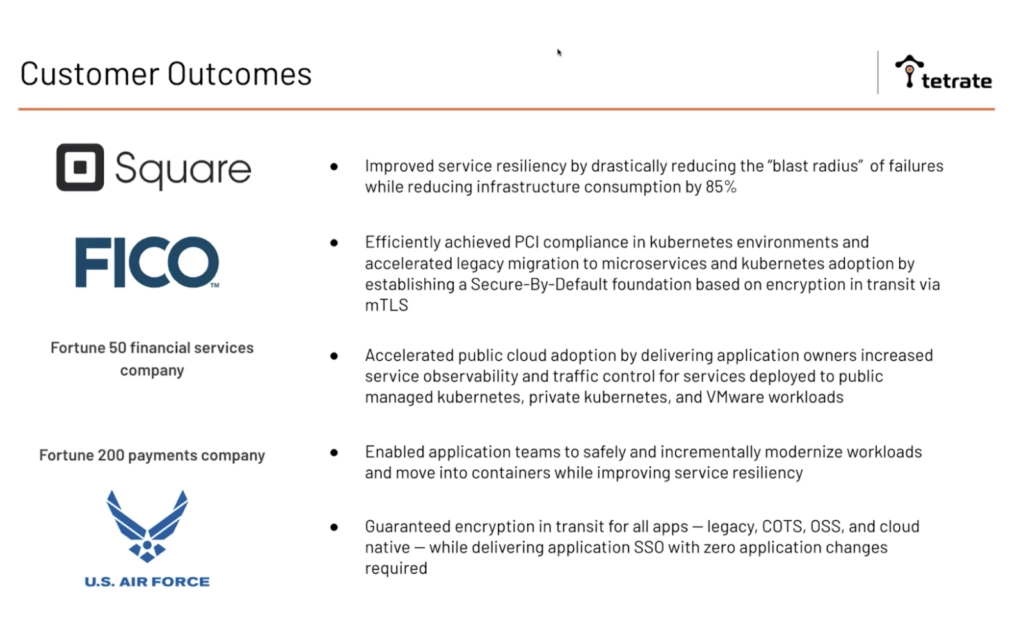

TSB is used in production by Fortune 500 companies for a variety of use cases. FICO used it to achieve PCI compliance for their Kubernetes environments. Square increased the resiliency and availability of several important systems and used service mesh features to reduce their infrastructure footprint. The U.S. Department of Defense adopted Istio for encryption in transit and application-level security, and TSB codifies their best practices. With TSB, one of the top financial services companies in the country accelerated both its migration to the cloud and its migration away from legacy VMs. And a top payments company began modernizing its application workloads, improving resiliency and its overall security posture while reducing the time it took its developers to deliver features.

With those use cases in mind, let’s look at how Tetrate Service Bridge works. Again, TSB is a management plane for a multi-cluster service mesh. Envoy runs in your compute clusters as the data plane, programmed by Istio control planes. In addition to those open source components, TSB ships a control plane that runs in the cluster and delivers configuration to Istio itself.

This matters because configuration needs to be specialized to the local cluster, based on its local context. These local control planes are also responsible for propagating cross-cluster service discovery information to enable runtime failover. In the demo below, we’ll kill one of our pods at runtime and watch cross-cluster failover happen automatically.
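To make cross-cluster service discovery a little more concrete, here is a minimal Python sketch of how a per-cluster control plane might merge its local registry with what peer clusters advertise. It assumes, as the demo topology suggests, that services in another cluster are reached through that cluster’s gateway rather than by pod IP. The `Cluster` and `Endpoint` types, the gateway addresses, and `build_registry` are hypothetical illustrations, not TSB’s actual data model.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Endpoint:
    address: str       # pod IP for local endpoints, peer gateway address for remote ones
    cluster: str       # cluster that actually hosts the workload
    via_gateway: bool  # True when traffic must cross clusters through a gateway


@dataclass
class Cluster:
    name: str
    gateway: str  # hypothetical address of this cluster's gateway
    services: dict[str, list[str]] = field(default_factory=dict)  # service -> pod IPs


def build_registry(local: Cluster, peers: list[Cluster]) -> dict[str, list[Endpoint]]:
    """Build the service view that `local`'s control plane would program into Envoy.

    Local services resolve to pod IPs; services in peer clusters resolve to
    that peer's gateway, so each cluster gets a view specialized to itself.
    """
    registry: dict[str, list[Endpoint]] = {}
    for svc, pods in local.services.items():
        registry.setdefault(svc, []).extend(Endpoint(ip, local.name, False) for ip in pods)
    for peer in peers:
        for svc, pods in peer.services.items():
            if pods:  # only advertise services that currently have running pods
                registry.setdefault(svc, []).append(Endpoint(peer.gateway, peer.name, True))
    return registry


east = Cluster("gke-east", "10.0.0.100", {"details": ["10.1.0.5"], "reviews": ["10.1.0.6"]})
west = Cluster("gke-west", "10.8.0.100", {"details": ["10.9.0.5"]})
print(build_registry(east, [west])["details"])
```

Calling `build_registry(west, [east])` instead yields the West cluster’s own view, which is the point of specializing configuration per cluster.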

But the real challenge for organizations adopting a mesh is how to connect these runtime pieces to the organization itself. TSB’s management plane bridges the gap between the mesh and what the organization needs to achieve with it. On top of the open source components, TSB provides a rich set of cross-cluster capabilities:

- Multitenancy: Both in terms of who can configure what, as well as isolating the impact of changes that are made via TSB to the components at runtime.

- Traffic shaping: All of the capabilities Istio offers within a single cluster are available both in and across clusters, and an application gateway transparently handles cross-cluster load balancing, easing transitions from legacy VM-based deployments to, for example, modern ECS, EC2, or EKS deployments.

- High availability and resiliency: All the good availability features of a mesh (e.g., retries, circuit breakers), but again coordinated across clusters, including the ability to fail over across those clusters when a circuit breaker trips.

- Cross-cluster security policies and access control: Consistent policies and fine-grained access control

- Unified telemetry and SLO management: Global, unified visibility

- Service discovery across clusters: A registry of services and runtime service discovery facilitated across clusters

- Fine-grained ingress and egress controls: Fine-grained controls around what can come in and out

- API gateway as part of the mesh: API gateway functionality is an inherent part of the mesh, not just at the application edge or the ingress gateway but all the way down to the sidecars in the middle of the mesh. TSB enables much of the functionality you might think of as “API-oriented,” such as rate limiting, authorization/authentication, and token validation.
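Because TSB builds on Istio, the circuit-breaking and cross-cluster failover behavior in the list above corresponds to standard Istio traffic policy. The Python sketch below builds an open source Istio `DestinationRule` as a plain dict and prints it as JSON (kubectl accepts JSON as well as YAML). This is the Istio resource, not TSB’s own configuration API, and the host, namespace, region names, and thresholds are assumptions chosen for illustration. Outlier detection and locality failover appear together because Istio only performs locality failover when outlier detection is configured.

```python
import json


def destination_rule(host: str, namespace: str, from_region: str, to_region: str) -> dict:
    """Build an Istio DestinationRule with circuit breaking and locality failover."""
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "DestinationRule",
        "metadata": {"name": host.split(".")[0], "namespace": namespace},
        "spec": {
            "host": host,
            "trafficPolicy": {
                # Circuit breaker: cap queued requests and requests per connection.
                "connectionPool": {
                    "http": {"http1MaxPendingRequests": 100, "maxRequestsPerConnection": 10}
                },
                # Eject an endpoint from the pool after 5 consecutive 5xx errors.
                "outlierDetection": {
                    "consecutive5xxErrors": 5,
                    "interval": "10s",
                    "baseEjectionTime": "30s",
                    "maxEjectionPercent": 100,  # allow every local endpoint to be ejected
                },
                # Prefer local endpoints; fail over to the other region once they are ejected.
                "loadBalancer": {
                    "localityLbSetting": {
                        "enabled": True,
                        "failover": [{"from": from_region, "to": to_region}],
                    }
                },
            },
        },
    }


rule = destination_rule("details.bookinfo.svc.cluster.local", "bookinfo", "us-east1", "us-west1")
print(json.dumps(rule, indent=2))
```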

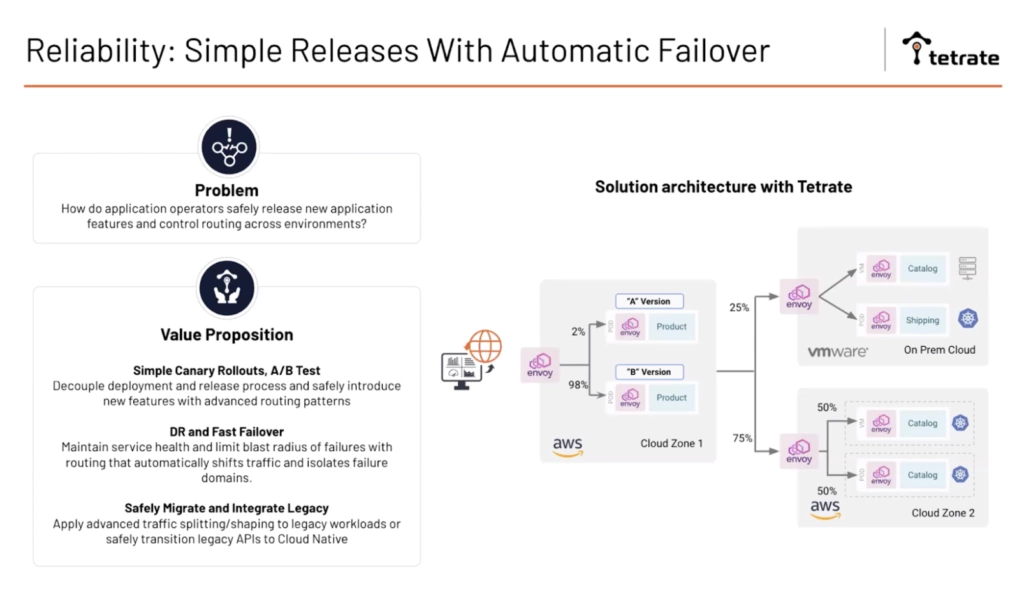

Demo Part 1: Simple releases with automatic failover at runtime

How do application operators safely release new application features and control routing across environments? With heterogeneous infrastructure and application teams moving rapidly, we need tools that make it safe to experiment: canary releases and A/B testing, but also runtime features like automatic failover, and the ability to shift traffic at a fine-grained level across, for example, VMs and Kubernetes to support strangling the monolith and similar patterns.
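Shifting traffic gradually from a VM-based version to a Kubernetes one usually comes down to weighted routing. Here is a minimal Python sketch of a percentage-weighted splitter, similar in spirit to the `weight` field on Istio VirtualService route destinations; the destination names and the 90/10 split are hypothetical, and a real proxy does this selection internally rather than in application code.

```python
import bisect
from collections import Counter


def make_router(weights: dict[str, int]):
    """Return a function mapping a roll in [0, 100) to a destination.

    Weights are percentages that must sum to 100.
    """
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    names = list(weights)
    cumulative = []
    running = 0
    for name in names:
        running += weights[name]
        cumulative.append(running)  # exclusive upper bound of this destination's range

    def route(roll: int) -> str:
        if not 0 <= roll < 100:
            raise ValueError("roll must be in [0, 100)")
        return names[bisect.bisect_right(cumulative, roll)]

    return route


# Hypothetical canary: send 10% of traffic to the Kubernetes version, 90% to the VM.
route = make_router({"reviews-vm": 90, "reviews-k8s": 10})
print(Counter(route(roll) for roll in range(100)))
```

In practice each request would get a roll such as `random.randrange(100)`; sweeping every roll once, as above, shows the exact 90/10 split. Moving the canary forward is then just a matter of changing the weights.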

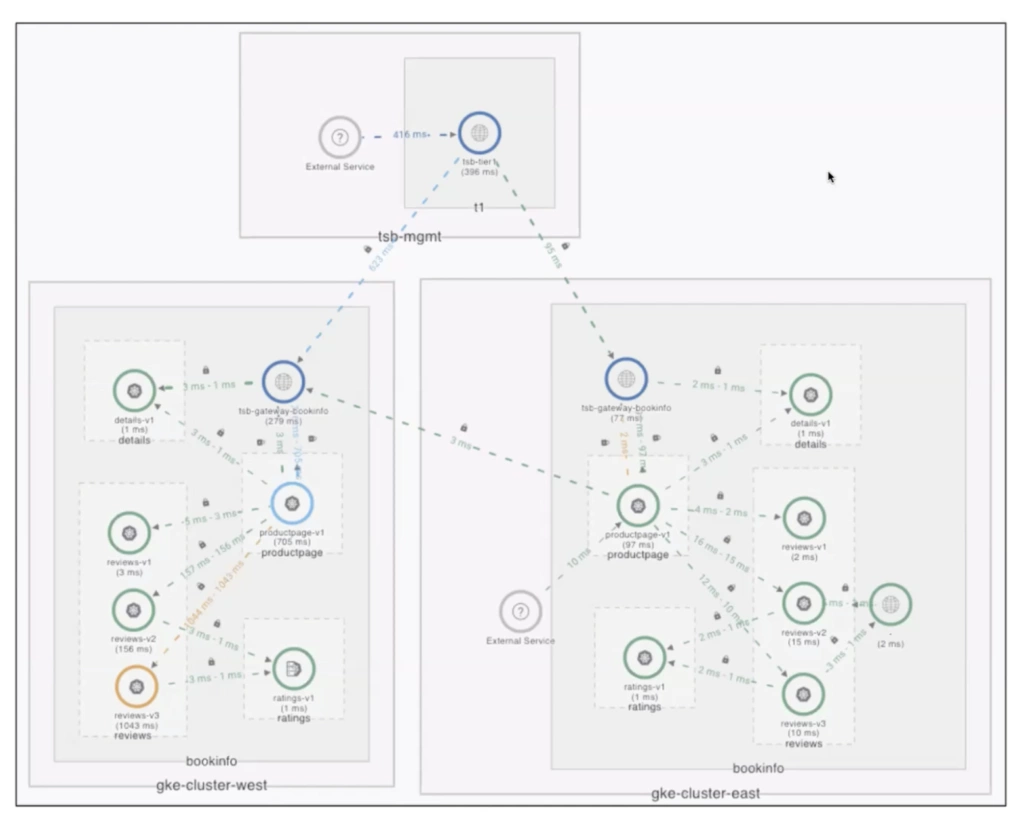

In the demo, Adam Zwickey uses the bookinfo application (the Istio sample application composed of four microservices) to demonstrate an active/active deployment across two regions (GKE clusters East and West) in which the global mesh performs locality-aware routing, keeping traffic local without crossing regional or cluster boundaries. Adam simulates a failure so we can watch the fast, automatic failover between regions, and then shows how to introduce a legacy (VM) workload into the application.

Locality-aware routing & failover simulation

We can note some of the key logical constructs in TSB: organization (e.g., a corporation with shared infrastructure), tenant (e.g., a business unit that shares common access to resources with specific privileges), and workspace (a partitioned zone where teams manage their exclusively-owned namespaces).
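The organization/tenant/workspace hierarchy is essentially a tree with one key rule: a namespace belongs to exactly one workspace. Here is a minimal Python sketch of that data structure, assuming namespaces are identified by hypothetical `"cluster/namespace"` strings; the class and method names are illustrative only and are not TSB’s API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Workspace:
    name: str
    namespaces: set[str]  # "cluster/namespace" strings, owned exclusively by this workspace


@dataclass
class Tenant:
    name: str
    workspaces: list[Workspace] = field(default_factory=list)


@dataclass
class Organization:
    name: str
    tenants: dict[str, Tenant] = field(default_factory=dict)

    def owner_of(self, namespace: str) -> Optional[tuple[str, str]]:
        """Return (tenant, workspace) owning the namespace, or None."""
        for tenant in self.tenants.values():
            for ws in tenant.workspaces:
                if namespace in ws.namespaces:
                    return tenant.name, ws.name
        return None

    def add_workspace(self, tenant_name: str, workspace: Workspace) -> None:
        """Attach a workspace, rejecting namespaces another workspace already owns."""
        for ns in workspace.namespaces:
            owner = self.owner_of(ns)
            if owner is not None:
                raise ValueError(f"{ns} already owned by {owner[0]}/{owner[1]}")
        self.tenants.setdefault(tenant_name, Tenant(tenant_name)).workspaces.append(workspace)


org = Organization("acme")  # hypothetical organization and tenant names
org.add_workspace("retail", Workspace("bookinfo", {"gke-east/bookinfo", "gke-west/bookinfo"}))
print(org.owner_of("gke-west/bookinfo"))
```

Checking ownership before attaching a workspace is what keeps one team from silently taking over another team’s namespace.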

Adam starts with two non-functioning, unconnected bookinfo applications. He applies a YAML file containing a terse description of ingress and routing policy, which the management plane distributes out to the clusters in the service mesh. Right away, the services dashboard lights up with metrics showing traffic (and the health of that traffic) flowing through the services. The application edge gateway resolves the application and participates in a global mesh spread across three clusters. Back at the bookinfo app’s home page, the service configuration shows all of our service entries in the mesh, and you’ll notice that requests are delivered to the local cluster, GKE East: the traffic is kept local.

Adam then simulates a failure: what would happen if the details service in the East rolled out a bad configuration, causing a temporary outage of its pods? To do this, he scales the deployment to zero, and when we look at the pods in the namespace, we can see they’re no longer handling requests. Upon refresh, we see that a failover has taken place.

Looking at the topology, we see traffic from the East cluster now going over mTLS to the gateway in the other cluster. We failed over seamlessly, across regions, so the application continues to function and we continue to meet our SLAs and SLOs. In this demo, there is clearly no user-perceived failure: we see a continuous stream of HTTP 200 responses rolling by.
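The failover decision itself can be reduced to a simple priority rule: use healthy endpoints in the caller’s own region, and only if there are none, move down a configured list of failover regions. Here is a minimal Python sketch of that rule, a simplification of locality-prioritized load balancing rather than Envoy’s actual algorithm; the region names and endpoint names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    name: str
    region: str
    healthy: bool = True  # False once the endpoint is down or ejected


def pick_pool(endpoints: list[Endpoint], client_region: str,
              failover: dict[str, list[str]]) -> list[Endpoint]:
    """Return the endpoints a client in `client_region` should balance across.

    Healthy local endpoints win; otherwise try the configured failover
    regions in order; if nothing is healthy, return an empty pool.
    """
    def healthy_in(region: str) -> list[Endpoint]:
        return [e for e in endpoints if e.region == region and e.healthy]

    for region in [client_region, *failover.get(client_region, [])]:
        pool = healthy_in(region)
        if pool:
            return pool
    return []


failover = {"us-east1": ["us-west1"], "us-west1": ["us-east1"]}
normal = [Endpoint("details-east", "us-east1"), Endpoint("details-west", "us-west1")]
# Simulated outage: the East details endpoint is no longer available.
outage = [Endpoint("details-east", "us-east1", healthy=False), Endpoint("details-west", "us-west1")]
print([e.name for e in pick_pool(normal, "us-east1", failover)])
print([e.name for e in pick_pool(outage, "us-east1", failover)])
```

Under normal conditions the East client stays local; during the outage it is sent to West, which matches the behavior seen in the demo.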

Observability

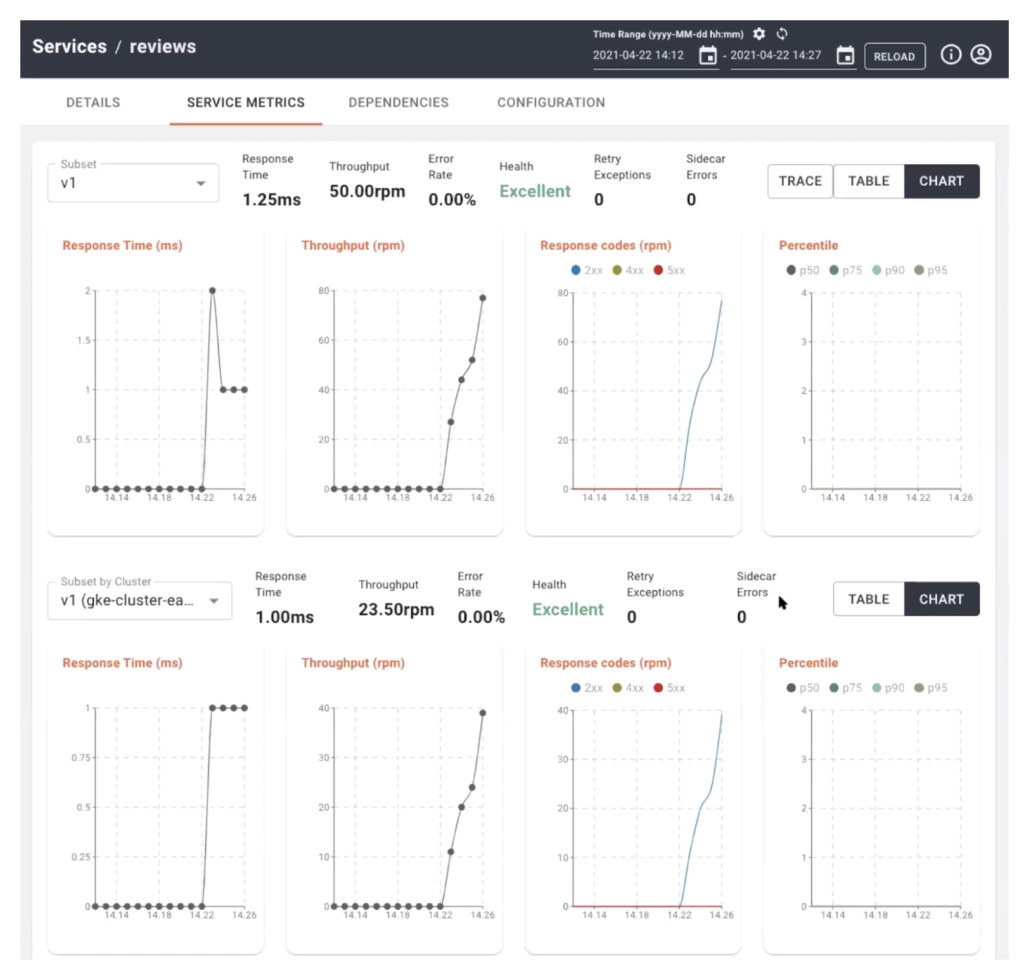

Looking at bookinfo’s three deployed versions, we get an interesting view of the application and can easily compare these subsets. For example, while the service is behaving well overall, we notice a couple of spikes and response times that are higher than average. In version 3, the response time is 729 milliseconds (vs. single digits), and we can dive further into the clickable RED (rate, errors, duration) metrics to home in on an issue in GKE West, version 3. Selecting that cluster and viewing the specific instance with the issue, we can drill in and find what’s wrong. This is a quick, simple way to get granular and see the metrics most relevant to the application’s behavior. TSB uses Apache SkyWalking for its high-performance observability features.
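As a rough illustration of what the RED view summarizes, here is a minimal Python sketch that aggregates request samples into rate, error ratio, and mean duration per subset, then picks the slowest one. It assumes each sample is a hypothetical `(subset, HTTP status, latency in ms)` tuple, and the sample values are made up; SkyWalking’s real pipeline is far more involved and commonly reports percentiles as well as averages.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Red:
    rate: float         # requests per second over the window
    error_ratio: float  # fraction of responses with a 5xx status
    mean_ms: float      # mean response time in milliseconds


def red_by_subset(samples: list[tuple[str, int, float]], window_s: float) -> dict[str, Red]:
    """Aggregate (subset, status, latency_ms) samples into RED metrics per subset."""
    grouped: dict[str, list[tuple[int, float]]] = defaultdict(list)
    for subset, status, latency_ms in samples:
        grouped[subset].append((status, latency_ms))
    result = {}
    for subset, rows in grouped.items():
        errors = sum(1 for status, _ in rows if status >= 500)
        result[subset] = Red(
            rate=len(rows) / window_s,
            error_ratio=errors / len(rows),
            mean_ms=sum(lat for _, lat in rows) / len(rows),
        )
    return result


# Made-up samples for illustration only.
samples = [("v1", 200, 5.0), ("v1", 200, 7.0), ("v2", 200, 6.0),
           ("v2", 503, 8.0), ("v3", 200, 729.0), ("v3", 200, 731.0)]
metrics = red_by_subset(samples, window_s=2.0)
slowest = max(metrics, key=lambda s: metrics[s].mean_ms)
print(slowest, metrics[slowest])
```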

VM onboarding

Next, Adam returns to the application to show how to introduce a legacy VM workload, with its sidecar, into the mesh just by applying some very simple Kubernetes YAML: the Istio custom resources (CRs).

All Adam really did here was apply a WorkloadEntry, the Istio config that tells the mesh where the VM lives. At this point nothing is connected, and no sidecar is running. TSB provides a helper command in the Tetrate CLI, tctl, that takes care of that bootstrapping for you: you install the sidecar and introduce the VM to the mesh with a tctl command, and within a few minutes the configuration has been downloaded to the proxy and the VM functions like any other pod in the mesh. Upon refresh, we see our hybrid workloads in East and West, and in the topology view the icon is half VM, half Kubernetes.
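A WorkloadEntry is a standard Istio resource, so its shape is easy to show. Here is a minimal Python sketch that builds one as a dict and prints it as JSON. The IP address, names, service account, and locality are hypothetical placeholders, and the tctl bootstrapping step is not shown because its exact command syntax is not part of this walkthrough.

```python
import json
from typing import Optional


def workload_entry(name: str, namespace: str, address: str, app: str, version: str,
                   service_account: str, locality: Optional[str] = None) -> dict:
    """Build an Istio WorkloadEntry describing where a VM workload lives."""
    spec = {
        "address": address,  # IP or DNS name of the VM
        "labels": {"app": app, "version": version},  # lets mesh selectors match the VM
        "serviceAccount": service_account,  # identity the VM's sidecar runs as
    }
    if locality:
        spec["locality"] = locality  # "region/zone" failure domain for locality-aware routing
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "WorkloadEntry",
        "metadata": {"name": name, "namespace": namespace},
        "spec": spec,
    }


entry = workload_entry("details-vm", "bookinfo", "10.128.0.42", "details", "v1",
                       "bookinfo-details", locality="us-east1/us-east1-b")
print(json.dumps(entry, indent=2))
```

Giving the VM the same `app` label as its Kubernetes counterpart is what allows it to show up as part of the same service, which is why the topology view can render a hybrid VM/Kubernetes workload.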

So to recap, we’ve seen how to route traffic, connect and load balance, integrate legacy workloads, and fail over seamlessly.

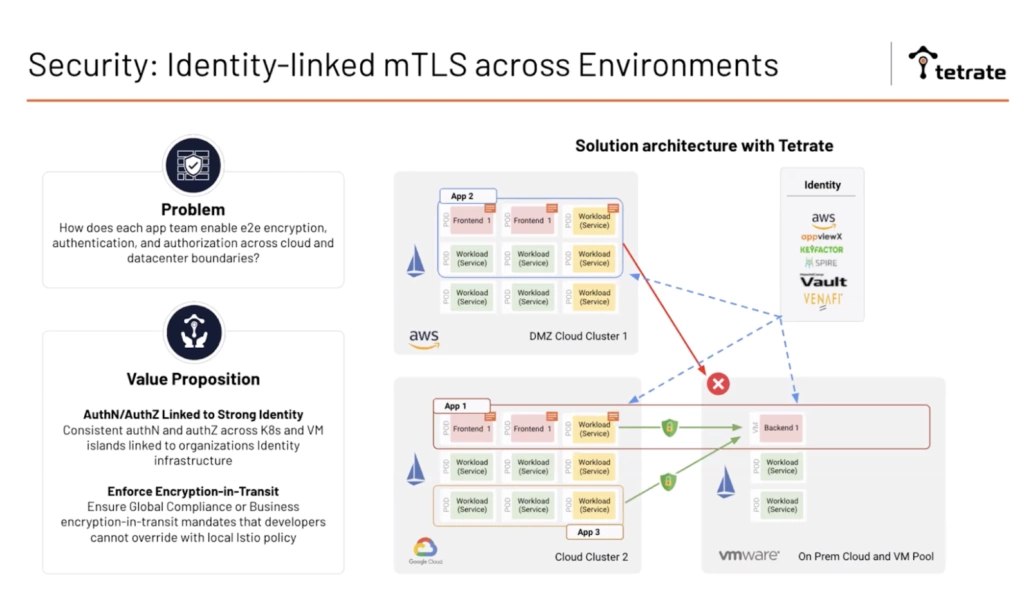

Demo Part 2: Applying a workload security policy

Security is a major use case for TSB, so let’s look at how it provides end-to-end encryption, authentication and authorization, and common policy not just across our data centers but also into our cloud footprints. Platform teams must keep the system secure while application teams want to use the best-of-breed services of each cloud provider for whatever they’re shipping, so maintaining a consistent security posture becomes an incredibly tough challenge. Istio has a lot of phenomenal tools for consistent identity and for enforcing encryption in transit.

In the final segment of the demo, Adam shows some of the security policy we can apply, and how a user such as a security architect can easily set a default, baseline policy for all applications and services deployed to a global mesh, or potentially to a workspace within it. We can set traffic settings, ingress, circuit breakers and timeouts, or require strict authentication, where every connection within the mesh must present a client certificate. We could also author service-to-service authorization policy, which looks not only at client certificates but also at the identity encoded in them. This moves us toward a secure-by-default posture in which every service has strong authentication and authorization tied to it.
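In open source Istio terms, a strict-mTLS baseline and a service-to-service rule correspond to a `PeerAuthentication` and an `AuthorizationPolicy`. The Python sketch below builds both as dicts and adds a deliberately simplified check of how an ALLOW rule matches a caller’s identity. The namespaces, the `cluster.local` trust domain, and the choice of productpage as the only permitted caller are assumptions; `is_allowed` is a hypothetical helper that evaluates only `source.principals` and ignores `to`, `when`, and wildcard matching, so it is not a full model of Istio’s authorization engine.

```python
from typing import Optional

TRUST_DOMAIN = "cluster.local"  # assumed default Istio trust domain


def principal(namespace: str, service_account: str) -> str:
    """Istio-style principal derived from a workload's certificate identity."""
    return f"{TRUST_DOMAIN}/ns/{namespace}/sa/{service_account}"


# Mesh-wide baseline: in the root namespace, this requires mTLS for every connection.
strict_mtls = {
    "apiVersion": "security.istio.io/v1beta1",
    "kind": "PeerAuthentication",
    "metadata": {"name": "default", "namespace": "istio-system"},
    "spec": {"mtls": {"mode": "STRICT"}},
}

# Service-to-service rule: only productpage may call details.
allow_productpage = {
    "apiVersion": "security.istio.io/v1beta1",
    "kind": "AuthorizationPolicy",
    "metadata": {"name": "details-allow-productpage", "namespace": "bookinfo"},
    "spec": {
        "selector": {"matchLabels": {"app": "details"}},
        "action": "ALLOW",
        "rules": [{"from": [{"source": {"principals": [principal("bookinfo", "bookinfo-productpage")]}}]}],
    },
}


def is_allowed(peer: Optional[str], policy: dict) -> bool:
    """Simplified ALLOW check on the caller's principal (None = no client certificate)."""
    for rule in policy["spec"].get("rules", []):
        sources = rule.get("from")
        if not sources:
            return True  # a rule with no `from` matches every source
        for entry in sources:
            if peer in entry.get("source", {}).get("principals", []):
                return True
    return False  # nothing matched, so an ALLOW policy denies the request


print(is_allowed(principal("bookinfo", "bookinfo-productpage"), allow_productpage))
print(is_allowed(principal("bookinfo", "bookinfo-reviews"), allow_productpage))
```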

Conclusion

We’ve covered TSB’s basic use cases and features, and how Fortune 500 companies use it in production for PCI compliance, zero trust security, resiliency, and reliability. In the demo, we saw how, within just a few minutes and with only a few lines of config or policy, we can build a powerful architecture with highly available applications running active/active across clusters and regions. When possible, routing is optimized to keep traffic within the cluster, or to take the fewest hops, for maximum efficiency. But when things go wrong, we can fail over across clusters. We introduced a legacy VM in a systematic and safe way, which we can then begin to modernize. And lastly, we saw how to establish a baseline security policy.

Tetrate Service Bridge 1.3 is now available. Explore it further or contact Tetrate for details.

Zack Butcher is a Tetrate engineer. He is an Istio contributor and member of its Steering Committee, and is the co-author of Istio: Up and Running (O’Reilly, 2019).

Adam Zwickey is a solutions engineering leader at Tetrate. He previously worked as Field Principal for VMware’s Modern Application Platform business unit. For nearly a decade, his focus has been helping Global 2000 companies modernize their infrastructure platforms and adopt cloud native application architectures.

Tevah Platt is a content creator for Tetrate who has written about service mesh and the open source Istio, Envoy, and SkyWalking projects.