Securing microservices across clouds using Tetrate Service Bridge

Introduction

Today, digital transformation is a well-established priority for almost all organizations across industries. With digital transformation, which includes adopting cloud, microservices, and container technologies, C-suite leaders aim to deliver services faster and on demand.

Cloud-native technologies, containerization, and microservice architecture empower IT to run scalable applications, but they also introduce a new set of security challenges. CISOs, CIOs, and platform architects struggle to proactively ensure the security of modern applications.

This blog post is meant as an educational piece for CISOs, architects, and DevSecOps teams looking to adopt cloud and container technology securely. We will cover the most prominent security risks of adopting microservices and Kubernetes and ways to overcome them using service mesh technology.

Even a longer piece such as this one can only serve as an introduction to such a complex topic. If you would like to know more, contact us.

The current security posture of microservices and containerized systems

The IT security teams of organizations that have adopted a cloud-first strategy constantly monitor their environments to identify misconfigurations and compliance risks in the cloud and in containers. The goal is to minimize security risks and to avoid data breaches in infrastructure, platforms, and software. Let’s look at the current state of security in those organizations and the challenges security managers face.

Ensuring security for container infrastructure is tough

When developing web applications, platform architects often use containers orchestrated by Kubernetes for scalability and to support rapid deployment of software. Kubernetes was designed to be portable (usable on-premises and in any cloud), customizable, and able to handle workloads at scale. This means developers can quickly build applications that are easy to deploy, and Operations can scale production traffic up or down based on user demand.

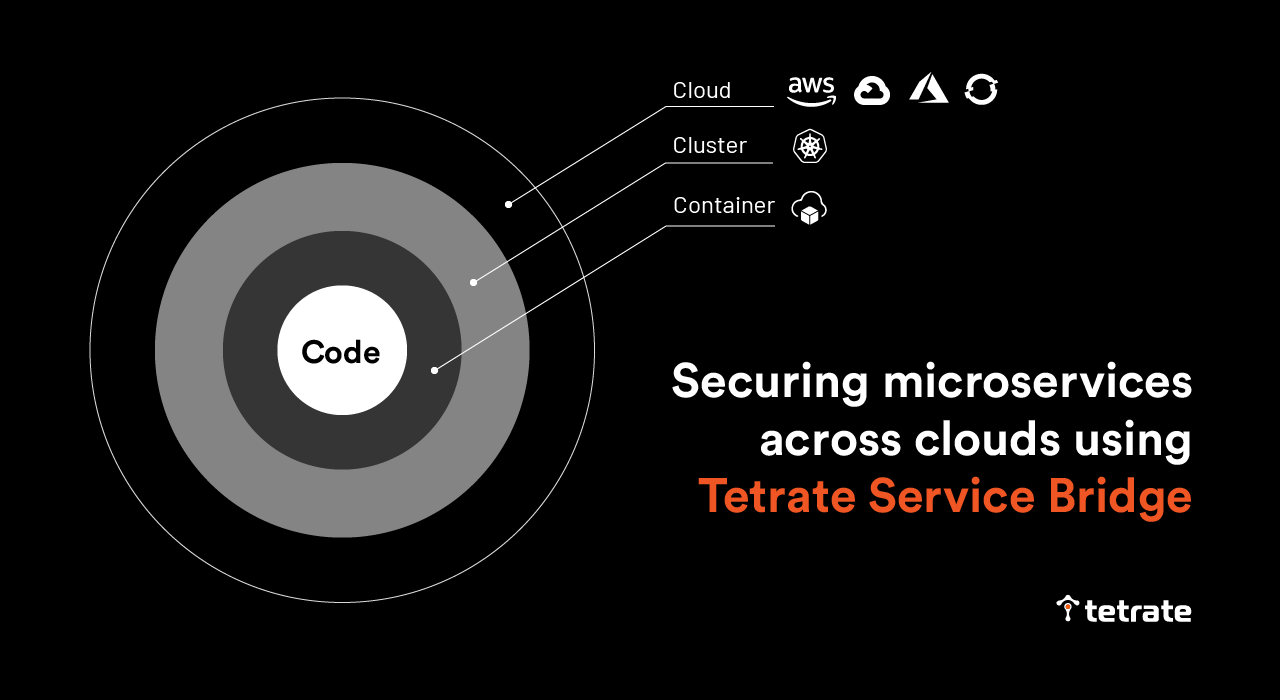

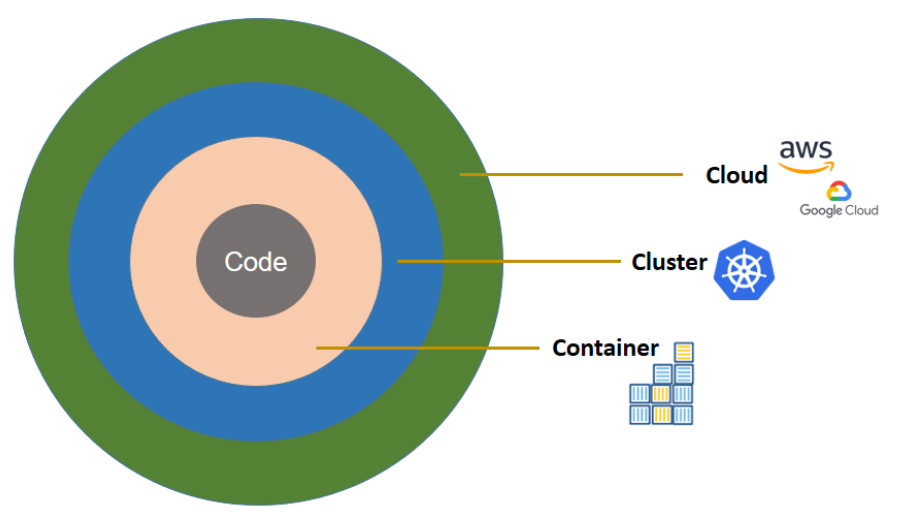

These flexible implementations create an overwhelming number of possible configurations that must be secured against attacks and vulnerabilities in production systems. Of the 4C’s of cloud native security described by the Kubernetes project (Code, Container, Cluster, and Cloud), developers typically feel responsible for security only at the code level.

Figure A: 4C’s of cloud native security. (Source: https://kubernetes.io/)

In software delivery, developers ensure code security using frameworks and automated tools such as Static Application Security Testing (SAST) and Dynamic Application Security Testing (DAST). Securing the infrastructure (containers, clusters, and cloud), however, involves a great deal of observation and configuration (refer to Figure B). Developers experience toil from repeated configuration and reconfiguration for security purposes, which is not their core work. And security teams cannot directly verify that the proper policies are actually in place and working effectively across the IT estate, leaving their organizations vulnerable.

Figure B: List of security configurations as per Kubernetes 4C’s cloud native security

With so many configuration options and such a steep learning curve, even security managers find it challenging to secure production systems running on Kubernetes clusters across on-premises and public cloud platforms.

Software is delivered faster with CI/CD pipelines, but security is left behind

With modern software delivery approaches, deploying code no longer takes months. With the release process automated through CI/CD pipelines and cloud orchestration tools, the DevOps team can deliver changes to production daily, or even multiple times a day. The rate of innovation has increased in many organizations with the advancement of cloud deployment technologies and the use of GitOps methodology, but in many cases, the focus on robust security and compliance has not kept up.

Some IT organizations are gradually integrating security in their DevOps journey to remove vulnerabilities in cloud native applications. This methodology of making security a part of the software delivery process is called DevSecOps. In this practice, the DevOps team, compliance managers, security managers, and network administrators collaborate to discuss security requirements and architect responses to threat models before deploying changes.

Involving security in the software delivery process improves the security posture, but it also slows the pace of delivery, which is not in the interest of the business.

Cloud and containerized workloads remain exposed to runtime attacks

Although organizations are adopting cloud and Kubernetes technology faster than ever, security managers and architects find them complicated to secure fully. According to a Verizon report, security breaches in the cloud have surpassed breaches on-premises (and the bad guys may just be getting started). There are also frequent reports of breaches and hacks of containerized applications. A 2021 report from Red Hat found that 90% of respondents had experienced a security incident involving their container and Kubernetes environments over the previous year.

One common reason cloud native distributed systems fail to stay secure is runtime attacks on Kubernetes clusters, which bring a new set of security challenges. An attacker who breaches a single container can often move laterally to other containers in the same cluster. Guidance from the US National Security Agency (NSA) explaining why attackers target Kubernetes to steal data and computing power underscores the need for security in containerized applications.

Security managers try to avoid dependence on implicit trust between various services in Kubernetes clusters and use authentication and authorization methods to ensure secure network connectivity in containerized applications.

Handling authorization and access control policies is not a piece of cake

Architects often build systems distributed across multiple clouds and on-premises data centers to avoid vendor lock-in, decrease costs, improve failover options, and enhance disaster recovery. The decoupled components of these distributed applications depend on other services and communicate with them through API or gRPC calls.

A real-life production environment is usually far more complex than the simple application in Figure C, often involving hundreds of microservices deployed into multiple clusters in multiple clouds, alongside monolithic applications that run core business functions on virtual machines or bare-metal servers in on-premises data centers. All of these services communicate through many endpoints.

Figure C: The services of a simple book reviews application hosted on managed Kubernetes clusters across the cloud and on-premises VMs (Source: istio.io)

Security managers formulate and mandate authentication and authorization (AuthN/Z) policies to secure hundreds of services and the communication between them. In addition, security managers and architects want to implement strong role-based access control (RBAC), since internal employees can all too easily circumvent secured applications or services.

One of the most crucial concerns for security managers is restricting employees to the least privileges necessary to perform their duties, ensuring that access to every system is set to “deny all” by default, and maintaining proper documentation of users’ roles and responsibilities. Security managers also have to create and implement policies that keep IT compliant with industry standards such as SOC 2, HIPAA, and PCI DSS.

Unfortunately, security managers find it challenging to define and manage AuthN/Z policies for hundreds of users, applications, groups, devices, and APIs from one central location. They often lack real-time visibility into what’s happening in the environment.

How to ensure security in microservices using Tetrate Service Bridge

Many organizations have adopted a service mesh as infrastructure for application development and delivery. Istio, a service mesh that uses Envoy as its data plane proxy, is typically deployed per Kubernetes cluster to manage traffic among the services running there. Istio is the reference implementation for the US National Institute of Standards and Technology (NIST) standards for zero trust architecture.

Tetrate Service Bridge (TSB) enables security, agility, and observability for all edge-to-workload applications and APIs via one cloud-agnostic centralized platform. It provides built-in security and centralized visibility and governance across all environments for platform owners, yet empowers developers to make local decisions for their applications.

Istio works at the Kubernetes cluster level; TSB is used to manage multiple Istio instances across clusters, clouds, and on-premises. TSB also allows legacy applications running on virtual machines or bare-metal servers to be integrated into, and managed as part of, the service mesh.

TSB builds on Istio and Envoy (refer to Figure D) to deliver an enterprise-grade service mesh, providing FIPS-certified Istio builds for your application and cloud platform, lifecycle management of Istio and Envoy, and other enhancements for increased usability.

Figure D: The TSB management plane works on top of Istio and Envoy

Tetrate Service Bridge (TSB) sits at the application edge. It controls request-level traffic across all your compute clusters, shifts traffic among multi-cloud, Kubernetes, and traditional compute clusters, and provides north-south API gateway functionality.

In addition, TSB provides a global management plane to define security policies and configuration, collect telemetry, and manage the lifecycle of Istio and Envoy across your entire network topology. With TSB, the security team can take security out of the application code and put it in the transparent network layer where it belongs, so developers don’t have to spend their time modifying code for security purposes.

With TSB, the DevOps team can deploy applications across on-premises and cloud infrastructure as needed to meet business requirements. At the same time, the security team can have central control of security policies for the microservices.

Abstracting security from the application through multi-tenancy hierarchy

TSB helps security managers and architects create a logical hierarchy of users (see Figure E) to interact with the physical infrastructure or resources such as clouds, containers, and virtual machines. By abstracting the whole infrastructure, security can be implemented and managed easily across the organization, without the need to implement configuration changes by hand across hundreds or thousands of Kubernetes clusters.

Figure E: Organizational hierarchy created by TSB for policy implementation and management

Security managers or DevSecOps leads can apply policies to all resources under TSB management, i.e., users, teams, and infrastructure within the organization. This makes configuring applications and services safer and easier. TSB also provides clear visibility into resources, their owners and shared owners, and their users, to help manage resources safely.

TSB uses tenancy to create boundaries around user access to resources and their configurations. Security managers can create tenants representing a group of individuals (or users) and allocate resources to them. For instance, an application developer working on a UI module can be assigned to a tenant called UI in TSB, and the UI tenant can have access to resources governed by security policies appropriate to its needs. The containers used by the UI team, for example, can be protected from access by the public Internet.

Any policies defined by stakeholders at a higher level of the hierarchy are inherited by the levels below. Security managers can create new policies at any level: organization, tenant, or workspace. Multi-tenancy makes it easy to manage authorization policies, access control policies, and user policies, both organization-wide and locally.
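To make the inheritance rule concrete, here is a minimal Python sketch of policies flowing down an organization, tenant, and workspace chain. The `Node` class, the policy keys, and the rule that a closer level overrides an inherited key on conflict are all assumptions for illustration; TSB’s actual precedence rules and policy schema may differ.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    """One level of a hypothetical TSB-style hierarchy: organization, tenant, or workspace."""
    name: str
    policies: dict[str, str] = field(default_factory=dict)
    parent: Optional[Node] = None

    def effective_policies(self) -> dict[str, str]:
        """Collect policies from the root down; a closer level wins on conflicts (assumption)."""
        inherited = self.parent.effective_policies() if self.parent else {}
        return {**inherited, **self.policies}


org = Node("acme", {"mtls": "STRICT", "jwt_issuer": "https://idp.example.com"})
tenant = Node("ui", {"public_ingress": "deny"}, parent=org)
workspace = Node("ui-storefront", {"jwt_issuer": "https://ui-idp.example.com"}, parent=tenant)

print(workspace.effective_policies())
# {'mtls': 'STRICT', 'jwt_issuer': 'https://ui-idp.example.com', 'public_ingress': 'deny'}
```

The workspace never restates `mtls` or `public_ingress`, yet both apply to it, which is the point of defining policy once at a higher level.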

Securing multicloud and multicluster communication through mTLS

To secure data in transit and user access, the TSB management plane allows the configuration of runtime policies such as mTLS and end-user authentication policies based on JSON Web Tokens (JWT). TSB also allows configuring runtime access policies with service identities.

mTLS authentication for data encryption

TSB offers Istio’s peer authentication resources to verify the clients making a connection. They let you implement mTLS authentication in your service mesh through Envoy proxies, which run alongside each service as sidecar proxies. The client-side Envoy proxy completes a handshake with the server-side Envoy proxy, and traffic flows between the client side and the server side only once the mutual TLS connection is established (refer to Figure F).

Figure F: Mutual TLS handshake based on certificates between two applications using Envoy proxies.

In Istio, this mTLS-based authentication is called peer authentication, and it does not require changes to application code. It gives each service a strong identity, enabling interoperability across clusters and clouds. Security managers can define mTLS-based authentication policies in the TSB management plane to encrypt service-to-service communication in the network, helping to prevent man-in-the-middle attacks.
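For readers who want to see what “mutual” means at the TLS layer, the sketch below uses Python’s standard `ssl` module to build the two contexts an mTLS connection needs. In a mesh, the Envoy sidecars do this on the application’s behalf, which is why no code changes are required; this is not how Envoy or TSB is configured. The certificate file names in the comments are hypothetical.

```python
import ssl


def server_mtls_context() -> ssl.SSLContext:
    """Server side: present a certificate AND require a valid one from every client."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    ctx.verify_mode = ssl.CERT_REQUIRED  # this is what makes the TLS "mutual"
    # Hypothetical file names; a mesh issues and mounts these automatically:
    # ctx.load_cert_chain("server-cert.pem", "server-key.pem")
    # ctx.load_verify_locations("mesh-root-ca.pem")
    return ctx


def client_mtls_context() -> ssl.SSLContext:
    """Client side: verify the server, and present our own certificate."""
    # PROTOCOL_TLS_CLIENT enables CERT_REQUIRED and hostname checking by default.
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # ctx.load_cert_chain("client-cert.pem", "client-key.pem")
    # ctx.load_verify_locations("mesh-root-ca.pem")
    return ctx
```

The key difference from ordinary server-only TLS is the server requiring a client certificate (`CERT_REQUIRED`). Istio’s sidecars additionally check the service identity carried in the peer certificate rather than relying on a DNS hostname check like the one `PROTOCOL_TLS_CLIENT` enables.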

TSB provides a certificate management system that automatically generates, distributes, and rotates the private keys and certificates used to establish these mTLS connections.
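Automatic rotation usually means reissuing a certificate well before it expires. The helper below is a hypothetical sketch of that decision: it reports rotation as due once a chosen fraction of the certificate’s lifetime has elapsed. The function name, the `grace_ratio` parameter, and the 24-hour lifetime in the example are illustrative assumptions, not TSB settings.

```python
from datetime import datetime, timedelta, timezone


def rotation_due(not_before: datetime, not_after: datetime, now: datetime,
                 grace_ratio: float = 0.5) -> bool:
    """Return True once `grace_ratio` of the certificate's validity period has passed.

    grace_ratio is a hypothetical knob: 0.5 means "rotate at the halfway point".
    """
    lifetime = not_after - not_before
    return now >= not_before + lifetime * grace_ratio


issued = datetime(2024, 1, 1, tzinfo=timezone.utc)
expires = issued + timedelta(hours=24)
print(rotation_due(issued, expires, issued + timedelta(hours=6)))   # False
print(rotation_due(issued, expires, issued + timedelta(hours=13)))  # True
```

Rotating at a fraction of the lifetime, rather than at expiry, leaves time to retry if the certificate authority is briefly unreachable.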

JWT based authentication for user access

For end-user authentication, which verifies the credential attached to a request, TSB uses Istio’s existing RequestAuthentication resource. Security managers can use it to verify credentials by validating the JSON Web Token (JWT) attached to each request. The policy specifies where to find the token in the request, the expected issuer, and the public JSON Web Key Set (JWKS) used to verify the token’s signature. Security managers can specify authentication policies and rules according to their organization’s standards, and TSB accepts or rejects user requests depending on whether the token matches those policies.
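The claim checks such a policy implies can be sketched in a few lines of Python. This minimal example decodes a JWT’s payload and checks the issuer (`iss`) and expiry (`exp`) claims. It deliberately omits signature verification against the JWKS, which a real validator must perform before trusting any claim, so treat it as an illustration of the claim checks only. The function name is hypothetical.

```python
import base64
import json
import time
from typing import Optional


def _b64url_decode(segment: str) -> bytes:
    """JWT segments are unpadded base64url; restore the padding before decoding."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def jwt_claims_ok(token: str, expected_issuer: str, now: Optional[float] = None) -> bool:
    """Check a JWT's issuer and expiry claims.

    WARNING: this sketch does NOT verify the signature. A real validator must first
    verify it against the issuer's JSON Web Key Set (JWKS) before trusting any claim.
    """
    try:
        _header, payload, _signature = token.split(".")
        claims = json.loads(_b64url_decode(payload))
    except ValueError:  # wrong segment count, bad base64, or bad JSON
        return False
    if not isinstance(claims, dict):
        return False
    now = time.time() if now is None else now
    exp = claims.get("exp")
    return (claims.get("iss") == expected_issuer
            and isinstance(exp, (int, float))
            and exp > now)
```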

Because TSB’s global management plane is built on Istio, it lets you connect to the authentication providers of your choice, such as OpenID Connect and OAuth 2.0 providers like Keycloak, Google Auth, and Firebase Auth.

Access policies to apply RBAC for users

As mentioned in the multi-tenancy section, every resource within TSB has an access policy. The access policy describes a user’s privileges on a resource, along with other conditions. TSB can integrate with your corporate identity provider (e.g., LDAP or OIDC) and import your business structure, such as users and groups. Security managers can then map access control policies to those users and groups. These RBAC policies enforce governance, prevent users from affecting other teams’ resources, and help organizations stay compliant.
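A default-deny RBAC check can be sketched as follows. The `Binding` type, the role names, and the path-style resource names are hypothetical; the sketch assumes that a binding on a tenant also covers the workspaces beneath it, mirroring the hierarchy described earlier, but it does not reflect TSB’s actual access policy API.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical role -> permitted actions mapping.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "reader": {"read"},
    "writer": {"read", "write"},
}


@dataclass(frozen=True)
class Binding:
    """Hypothetical access policy entry: a role granted to a user or group on a resource."""
    subject: str   # "user:<name>" or "group:<name>"
    role: str      # a key of ROLE_PERMISSIONS
    resource: str  # path-style name, e.g. "tenants/ui"


def covers(binding_resource: str, resource: str) -> bool:
    """A binding on a parent resource also applies to everything beneath it (assumption)."""
    return resource == binding_resource or resource.startswith(binding_resource + "/")


def is_allowed(user: str, groups: set[str], action: str, resource: str,
               bindings: list[Binding]) -> bool:
    """Grant access only if some binding explicitly allows it; otherwise deny."""
    subjects = {f"user:{user}"} | {f"group:{g}" for g in groups}
    return any(
        b.subject in subjects
        and covers(b.resource, resource)
        and action in ROLE_PERMISSIONS.get(b.role, set())
        for b in bindings
    )
```

Because `any()` over no matching bindings is `False`, access is denied unless a policy explicitly grants it, which is the “deny all” default security managers look for.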

Audit and real-time visibility

Tetrate Service Bridge (TSB) allows security managers to proactively monitor and measure the integrity and security posture of microservices. TSB collects runtime telemetry from the Envoy proxies in the mesh, which helps security personnel, network administrators, and SREs track service behavior.

Besides metrics, TSB provides runtime observability into traffic as it flows to each service. The TSB management plane shows information such as who is authorized to use which services and what traffic is encrypted.

The security team can see how each service interacts with other services. In case of a malicious attack, they can quickly isolate compromised applications and then prepare patches. TSB also generates audit logs for a chosen time period that provide a complete record of each access to information. Audit logs help auditors and security managers trace potential security breaches or policy violations and quickly find the root cause of issues.
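As an illustration of the kind of question audit logs answer (who touched this resource, and when?), here is a hypothetical Python sketch that filters audit records by time window, resource, and actor. The `AuditRecord` fields and the `audit_trail` function are assumptions; TSB’s real audit log format is not described here.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, Optional


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical audit entry; TSB's actual audit log schema may differ."""
    timestamp: datetime
    actor: str     # who performed the access or change
    action: str    # e.g. "read", "update"
    resource: str  # e.g. "tenants/ui/workspaces/storefront"


def audit_trail(records: Iterable[AuditRecord], start: datetime, end: datetime,
                resource_prefix: str = "", actor: Optional[str] = None) -> list[AuditRecord]:
    """Return records in [start, end) under `resource_prefix`, optionally for one actor, oldest first."""
    hits = [
        r for r in records
        if start <= r.timestamp < end
        and r.resource.startswith(resource_prefix)
        and (actor is None or r.actor == actor)
    ]
    return sorted(hits, key=lambda r: r.timestamp)
```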

Service identities in runtime for securing services in a cluster

Because Tetrate Service Bridge (TSB) is built on Istio, it provides secure naming by default, which lets a client verify that the workload (VM or pod) serving a request is actually authorized to run the service it claims to be. TSB creates a service identity for each workload and stores the necessary information in a secure naming table. Server identities are encoded in certificates, while service names are retrieved through the discovery service or DNS. The secure naming information maps server identities to service names: a mapping from identity A to service name B means “A is authorized to run service B.”

These identities can also be used in runtime authorization policies, providing even greater control over your network security. TSB leverages SPIFFE to issue identities to all workloads across the heterogeneous environments in the mesh.
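Istio encodes workload identities as SPIFFE IDs of the form `spiffe://<trust-domain>/ns/<namespace>/sa/<service-account>`. The sketch below parses such an ID and performs a secure naming check: does the identity in the server’s certificate appear among the identities authorized to run the requested service? The table contents (a `bookinfo` namespace and `bookinfo-reviews` service account, echoing the book reviews example in Figure C) and the function names are hypothetical.

```python
from __future__ import annotations

from typing import NamedTuple, Optional
from urllib.parse import urlparse


class SpiffeId(NamedTuple):
    trust_domain: str
    namespace: str
    service_account: str


def parse_istio_spiffe_id(uri: str) -> Optional[SpiffeId]:
    """Parse spiffe://<trust-domain>/ns/<namespace>/sa/<service-account>; None if malformed."""
    parsed = urlparse(uri)
    parts = parsed.path.strip("/").split("/")
    if (parsed.scheme != "spiffe" or not parsed.netloc or len(parts) != 4
            or parts[0] != "ns" or parts[2] != "sa" or not all(parts)):
        return None
    return SpiffeId(parsed.netloc, parts[1], parts[3])


# Hypothetical secure naming table: service name -> identities authorized to run it.
SECURE_NAMING: dict[str, set[str]] = {
    "reviews.bookinfo.svc.cluster.local": {
        "spiffe://cluster.local/ns/bookinfo/sa/bookinfo-reviews",
    },
}


def server_may_run(service: str, presented_identity: str) -> bool:
    """Client-side check: is the identity in the server's certificate authorized to run `service`?"""
    return (parse_istio_spiffe_id(presented_identity) is not None
            and presented_identity in SECURE_NAMING.get(service, set()))
```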

With TSB, it becomes much easier for app developers and security managers to define granular policies, such as which services can talk to which others and on what terms. Such policies limit the attack surface and reduce the impact of security breaches.
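A granular service-to-service policy of this kind can be sketched as an allow-list: a request is permitted only if some rule matches the caller’s identity, HTTP method, and path, and everything else is denied. The `AllowRule` type and `authorize` function are hypothetical and only loosely modeled on Istio’s ALLOW-action AuthorizationPolicy, whose principals use the `<trust-domain>/ns/<namespace>/sa/<service-account>` form shown here.

```python
from __future__ import annotations

from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class AllowRule:
    """Hypothetical rule, loosely modeled on an Istio ALLOW AuthorizationPolicy."""
    principal: str           # caller identity, e.g. "cluster.local/ns/bookinfo/sa/bookinfo-productpage"
    methods: frozenset[str]  # permitted HTTP methods
    path_glob: str           # e.g. "/reviews/*"


def authorize(principal: str, method: str, path: str, rules: list[AllowRule]) -> bool:
    """Allow only requests that match some rule; deny everything else."""
    return any(
        principal == r.principal
        and method in r.methods
        and fnmatchcase(path, r.path_glob)
        for r in rules
    )


RULES = [
    AllowRule("cluster.local/ns/bookinfo/sa/bookinfo-productpage",
              frozenset({"GET"}), "/reviews/*"),
]
```

Denying whenever no rule matches mirrors Istio’s behavior once at least one ALLOW policy applies to a workload, and it keeps the attack surface limited to the calls that were explicitly permitted.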

Because Istio rotates service certificates frequently, any credentials an attacker manages to steal remain valid only for a short time, which makes them far less useful.

Since TSB decouples those policies from the underlying network and infrastructure, the policies are portable across environments (cloud, Kubernetes, on-prem, VM).

If you want help abstracting security away from application development, and helping security managers implement and manage all your compliance and security policies, feel free to contact us.

To find out how TSB can help you solve security challenges from day one, please book a demo with us.