Use Tetrate’s Open Source Istio Cost Analyzer to Optimize Your Cloud Egress Costs


Who Is This For?

You should read this if you run Kubernetes and/or Istio on a public cloud, and you care about your cloud bill. Cloud providers charge money for data egress, including data leaving one availability zone destined for another. If your Kubernetes deployments span availability zones, you are likely being charged for egress between internal components. Even if you don’t run Kubernetes/Istio, you’ll still run into cross-zone data egress costs, which this article will help you understand and minimize.

If you want to get started with a production-ready Istio distribution today, try Tetrate Istio Distro (TID), the easiest way to install, operate, and upgrade Istio.

Your Cloud Bill Nightmare

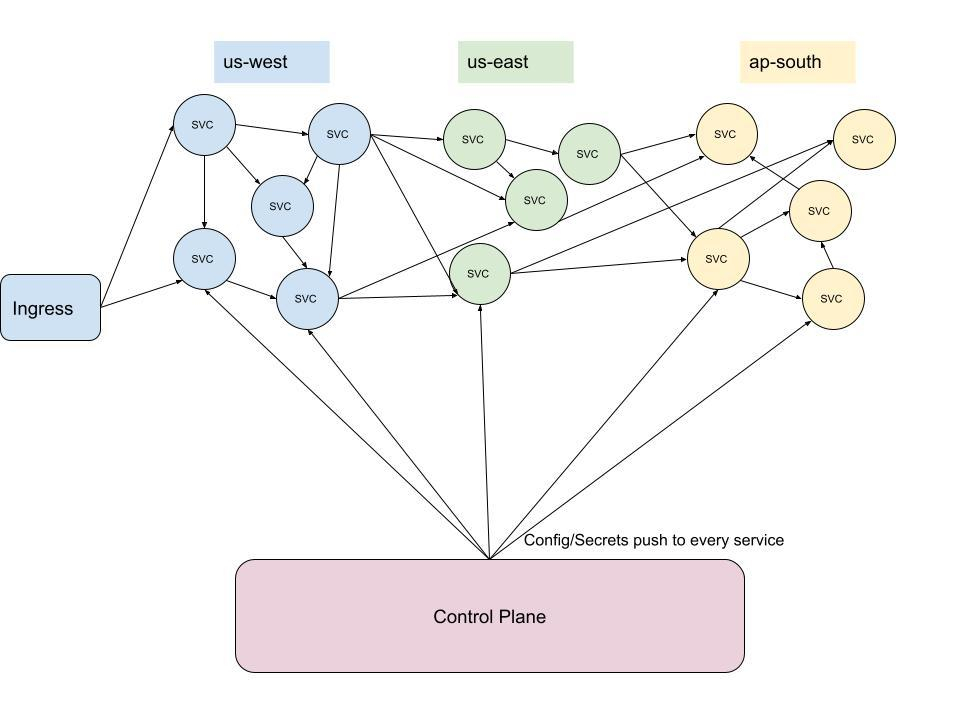

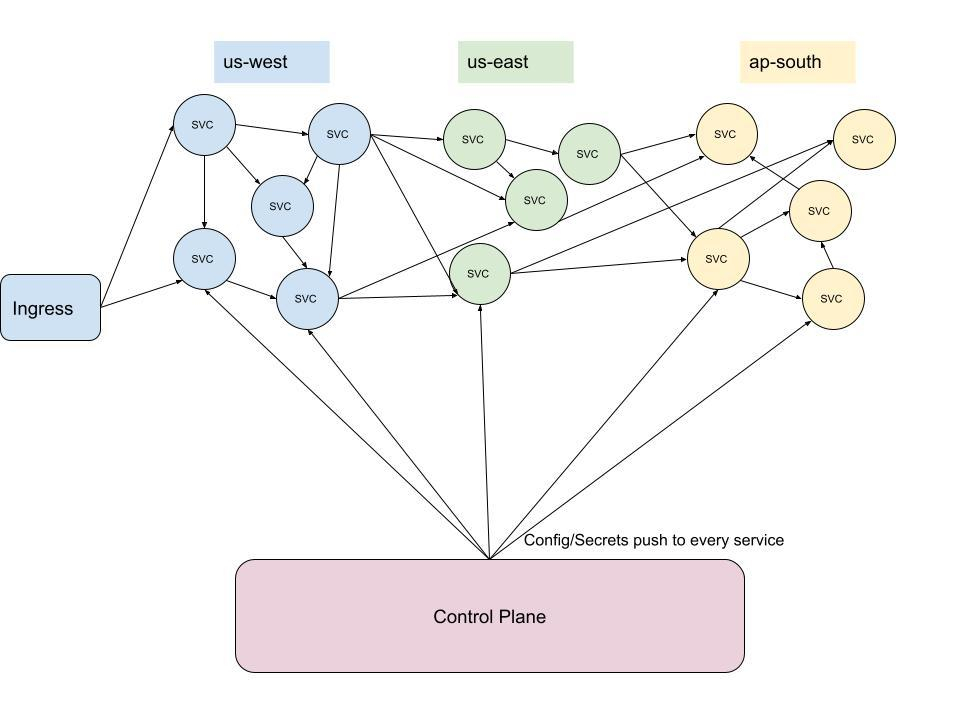

As the number of services in modern deployments grows, the amount of east-west (internal) traffic can grow quadratically. Some simple math shows why:

In a service mesh with N workloads, there are N * (N - 1) ordered pairs of workloads. Because we count a request and its response as a single connection, we divide by two, giving a maximum of (N^2 - N) / 2 connections. This doesn’t even take into account calls between the control plane and the proxies, which scale linearly. So, in the worst case, a single external call can fan out into on the order of N^2 internal calls.
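To make the growth concrete, here is a minimal Python sketch of the bound described above. The function name `max_connections` is purely illustrative and is not part of any tool.

```python
def max_connections(n: int) -> int:
    """Upper bound on distinct workload pairs in a mesh of n workloads: (n^2 - n) / 2."""
    if n < 0:
        raise ValueError("n must be non-negative")
    # n * (n - 1) is always even, so integer division is exact.
    return n * (n - 1) // 2


if __name__ == "__main__":
    for n in (10, 100, 1000):
        print(n, max_connections(n))
```

Going from 10 to 100 workloads raises the bound from 45 to 4,950 pairs: ten times the workloads, roughly a hundred times the possible connections.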

The only way to come close to completely avoiding cross-zone charges would be to run a cluster—including a replicated data source—in every availability zone. Even then, data replication would cost money, since change in state would need to be reflected globally. You simply can’t avoid egress charges.

When your mesh is spread across various geographical regions to meet availability requirements, the substantial amount of internal traffic has a cost. Cloud providers will charge a few cents per gigabyte of cross-region or cross-zone traffic, which can lead to some unexpected cloud bills for large, multi-locality distributed systems. As your deployment scales, so does your cloud bill, in ways that can be avoided.


Well, if we can’t avoid these costs altogether, we should at least track them with an eye towards cost optimization.

Introducing the Istio Cost Analyzer

To help our customers—and now, the Istio community at large—analyze and reduce the cost of data egress in their cloud deployments, we built the Istio Cost Analyzer, an open source tool that gives you complete visibility into the data transfer costs inside your mesh. Setting up the tool takes just one command, and running the tool is also just one command. In this article, we will present a quickstart tutorial, as well as review the inner workings of the tool.

Quickstart

You must have a Kubernetes cluster running with Istio installed. You must also have a healthy Istio Operator and a Prometheus deployment running.

Install

Install the cost analyzer with the following command:

go install github.com/tetratelabs/istio-cost-analyzer@latest

Setup

Once installed, run the setup command:

istio-cost-analyzer setup

This command sets up analysis in the default namespace. To change the namespace to be analyzed, set the --targetNamespace flag. The default configuration also assumes there is a running Prometheus deployment in the istio-system namespace. To change this, set the --promNs flag.

The cost analyzer will set your mesh up to collect cost-relevant data from the point the setup command is run, so you are able to analyze costs from this point onward.

Run

Run the cost analyzer with the following command:

istio-cost-analyzer analyze

Your output should look something like:

Total: -

SOURCE SERVICE  SOURCE LOCALITY  COST
reviews-v3      us-west1-b       -
productpage-v1  us-west1-b       -
reviews-v2      us-west1-b       -

For more information, use the --details flag:

Total: -

SOURCE SERVICE  SOURCE LOCALITY  DESTINATION SERVICE  DESTINATION LOCALITY  TRANSFERRED (MB)  COST
reviews-v2      us-west1-b       ratings-v1           us-west1-b            0.000655          -
reviews-v3      us-west1-b       ratings-v1           us-west1-b            0.001310          -
productpage-v1  us-west1-b       details-v1           us-west1-b            0.011250          -
productpage-v1  us-west1-b       reviews-v1           us-west1-b            0.004500          -
productpage-v1  us-west1-b       reviews-v2           us-west1-b            0.002250          -
productpage-v1  us-west1-b       reviews-v3           us-west1-b            0.004500          -

To analyze data within a time range, use the --start and --end flags, which both take a timestamp in RFC 3339 format.
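If you script your analysis runs, the timestamps can be generated rather than typed by hand. The sketch below formats RFC 3339 timestamps in UTC and assembles the argument list for the analyze command. The helper names `rfc3339` and `analyze_command` are hypothetical, and the sketch assumes the tool accepts the `Z` (UTC) form of RFC 3339.

```python
from datetime import datetime, timedelta, timezone
from typing import List


def rfc3339(ts: datetime) -> str:
    """Format a timezone-aware datetime as an RFC 3339 timestamp in UTC."""
    if ts.tzinfo is None:
        raise ValueError("timestamp must be timezone-aware")
    return ts.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


def analyze_command(start: datetime, end: datetime) -> List[str]:
    """Build the argument list for a time-bounded analysis run."""
    if end <= start:
        raise ValueError("end must be after start")
    return [
        "istio-cost-analyzer", "analyze",
        "--start", rfc3339(start),
        "--end", rfc3339(end),
    ]


if __name__ == "__main__":
    # Analyze the last 24 hours.
    now = datetime.now(timezone.utc)
    print(" ".join(analyze_command(now - timedelta(days=1), now)))
```

The resulting list can be passed directly to `subprocess.run` if you want to invoke the tool from a script.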

How the Analyzer Works

Behind the scenes, the cost analyzer uses a modified version of the Prometheus metric istio_request_bytes_sum to track data transfer. It uses this data, along with publicly available (although not entirely straightforward) cloud data transfer rates to generate a data transfer cost report.
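To get a feel for the underlying data, you could query Prometheus yourself. The sketch below builds a PromQL expression that sums transferred request bytes per source workload, destination workload, and destination locality, and wraps it in a URL for the Prometheus HTTP query API. This is not necessarily the query the cost analyzer issues: the grouping, the namespace filter, and the function names are assumptions made for illustration.

```python
from urllib.parse import urlencode


def bytes_by_locality_query(namespace: str, window: str = "1h") -> str:
    """PromQL: request bytes sent over `window`, grouped by workload pair and destination locality."""
    # destination_locality is the custom label added during setup;
    # the other labels are standard Istio metric labels.
    return (
        "sum by (source_workload, destination_workload, destination_locality) ("
        f'increase(istio_request_bytes_sum{{source_workload_namespace="{namespace}"}}[{window}])'
        ")"
    )


def query_url(prom_base: str, namespace: str, window: str = "1h") -> str:
    """URL for an instant query against the Prometheus HTTP API."""
    params = urlencode({"query": bytes_by_locality_query(namespace, window)})
    return prom_base.rstrip("/") + "/api/v1/query?" + params


if __name__ == "__main__":
    # The base URL is a placeholder, e.g. a port-forwarded Prometheus.
    print(query_url("http://localhost:9090", "default"))
```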

The cost tool labels all pods within your target namespace with a locality label, and annotates all of your deployments with the sidecar.istio.io/extraStatTags annotation to support custom Prometheus metric labels. Using a mutating webhook, the tool also labels and annotates pods and deployments created after the setup command is run.

Mutating Webhook

In order to label/annotate deployments/pods that already exist and that are created in the future, the cost analyzer uses a mutating webhook. The cost-analyzer-mutating-webhook deployment sits in the istio-system namespace, and when deployed:

- Labels all the pods with a locality label, whose value is derived from the respective node information.

- Annotates all deployments with the sidecar.istio.io/extraStatTags annotation, whose value is just destination_locality.

- Runs a concurrent informer to update all new pods with the locality label.

- Exposes a webhook endpoint for all new deployments to get annotated with the sidecar.istio.io/extraStatTags annotation.
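The first step in the list above, deriving a pod's locality from its node, can be sketched as follows. This assumes the locality is read from the well-known Kubernetes topology labels on the node; the article does not state which node fields the tool actually reads, and the function names are hypothetical.

```python
from typing import Dict, Optional

# Well-known Kubernetes node labels for zone and region.
ZONE_LABEL = "topology.kubernetes.io/zone"
REGION_LABEL = "topology.kubernetes.io/region"


def locality_from_node(node_labels: Dict[str, str]) -> Optional[str]:
    """Prefer the zone; fall back to the region; None if neither label is set."""
    return node_labels.get(ZONE_LABEL) or node_labels.get(REGION_LABEL)


def pod_label_patch(locality: str) -> dict:
    """Merge-patch body that sets the `locality` label on a pod."""
    return {"metadata": {"labels": {"locality": locality}}}


if __name__ == "__main__":
    labels = {ZONE_LABEL: "us-west1-b", REGION_LABEL: "us-west1"}
    print(pod_label_patch(locality_from_node(labels)))
```

The label key `locality` matches the `upstream_peer.labels['locality']` expression used in the Operator configuration.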

Istio Operator

The setup command also edits the Istio Operator Prometheus configuration to add a new label to the istio_request_bytes metric. The following configuration is added:

spec:
  values:
    telemetry:
      v2:
        prometheus:
          configOverride:
            outboundSidecar:
              metrics:
                - dimensions:
                    destination_locality: upstream_peer.labels['locality'].value
                  name: request_bytes

The added Operator configuration essentially specifies a new dimension for the istio_request_bytes metric, and the value of this dimension is the locality label of the peer. Note that the pod labeling that the webhook does sets this value.

Rate Data

The network data transfer costs are available on the AWS/GCP/Azure websites. The cost tool uses the following data sources:

- GCP: https://cloud.google.com/vpc/network-pricing#all-networking-pricing.

- AWS: https://aws.amazon.com/ec2/pricing/on-demand/.

Cloud providers generally only charge for egress data transfer, meaning we only need to track outgoing connections (which we do with the outboundSidecar configOverride).

The cost tool uses its own custom scripts to normalize this cloud rate data into a standard format, which can be seen in the pricing format explanation. Essentially, all transfer data is represented as a nested map, where the first layer is the source locality, and the second layer is the destination locality. Here is a JSON representation:

{
  "us-west1-b": {
    "us-west1-c": 0.05,
    "ap-south1-a": 0.13
  }
}

This example represents two data points: a rate for a call from us-west1-b to us-west1-c (0.05) and from us-west1-b to ap-south1-a (0.13). The rates are in dollars per gigabyte.
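Given a rate map in this shape, the cost of a single flow is a lookup and a multiplication. The following is a minimal sketch, not the tool's actual code: the function name is hypothetical, and it assumes that traffic staying within one locality is free.

```python
from typing import Dict

Rates = Dict[str, Dict[str, float]]

# Rates in dollars per gigabyte, copied from the example above.
RATES: Rates = {
    "us-west1-b": {"us-west1-c": 0.05, "ap-south1-a": 0.13},
}


def transfer_cost(rates: Rates, source: str, destination: str, gigabytes: float) -> float:
    """Dollar cost of sending `gigabytes` from the source locality to the destination locality."""
    if gigabytes < 0:
        raise ValueError("gigabytes must be non-negative")
    if source == destination:
        return 0.0  # assumption: no charge for traffic within one locality
    # Raises KeyError if no rate is known for this pair of localities.
    return rates[source][destination] * gigabytes


if __name__ == "__main__":
    print(transfer_cost(RATES, "us-west1-b", "us-west1-c", 100.0))
```

Raising on an unknown locality pair, rather than silently returning zero, keeps missing rate data from hiding real costs.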

Custom Rate Data

The cost tool works out of the box with AWS and GCP. If you want to add custom rates (running the cost tool with an unsupported cloud or with a negotiated rate), you can represent your rates in the file format described in the pricing documentation.

The Vision

The Istio Cost Analyzer is in its early stages. It currently only supports analysis within a single cluster on both AWS and GCP. Although this is useful, there’s a lot of potential for improvement.

Short-Term Plans

The cost analyzer should be an extremely reliable observability tool. This includes not just raw cost data, but also actionable insights into optimizing your cluster for network cost. To that end, we plan to build an “insights” feature that will take into account service dependencies and suggest actions you can take to reduce absolute costs. We imagine these insights will look something like this:

Total: <$0.01

SOURCE SERVICE  SOURCE LOCALITY  DESTINATION SERVICE  DESTINATION LOCALITY  TRANSFERRED (MB)  COST
reviews-v2      us-west1-c       ratings-v1           us-west1-b            0.000655          <0.01
reviews-v3      us-west1-b       ratings-v1           us-west1-b            0.001310          -
productpage-v1  us-west1-b       details-v1           us-west1-b            0.011250          -
productpage-v1  us-west1-b       reviews-v1           us-west1-b            0.004500          -
productpage-v1  us-west1-b       reviews-v2           us-west1-b            0.002250          -
productpage-v1  us-west1-b       reviews-v3           us-west1-b            0.004500          -

Insights found: 1

- Move reviews-v2 from us-west1-c to us-west1-b: Brings cost from <$0.01 to $0.00.

There are other forms of cost too, though. Latency between regions is often overlooked, but in the end, it’s a cost to the user. A future iteration of the cost tool should also take into account latencies between localities. For AWS, these are publicly available at https://www.cloudping.co/grid. There is a tradeoff between cost and latency, and that should be worked into the insights.
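One way such an insight could be computed is to try every single-service move and keep the one that lowers the total cost the most. The sketch below is only an illustration of that idea, since the insights feature does not exist yet. All names are hypothetical, it ignores latency entirely, and it treats same-locality traffic as free.

```python
from typing import Dict, List, Optional, Tuple

Flow = Tuple[str, str, float]  # (source service, destination service, gigabytes)
Rates = Dict[str, Dict[str, float]]  # dollars per gigabyte, source -> destination


def total_cost(flows: List[Flow], placement: Dict[str, str], rates: Rates) -> float:
    """Total egress cost of all flows for a given service -> locality placement."""
    cost = 0.0
    for src, dst, gb in flows:
        a, b = placement[src], placement[dst]
        if a != b:  # assumption: same-locality traffic is free
            cost += rates[a][b] * gb
    return cost


def best_move(flows: List[Flow], placement: Dict[str, str],
              rates: Rates) -> Optional[Tuple[str, str, float]]:
    """Return (service, new locality, new total cost) for the best single move, or None."""
    baseline = total_cost(flows, placement, rates)
    localities = sorted(set(placement.values()))
    best = None
    for svc in sorted(placement):
        for loc in localities:
            if loc == placement[svc]:
                continue
            trial_cost = total_cost(flows, {**placement, svc: loc}, rates)
            if trial_cost < baseline and (best is None or trial_cost < best[2]):
                best = (svc, loc, trial_cost)
    return best


if __name__ == "__main__":
    rates = {"us-west1-b": {"us-west1-c": 0.01}, "us-west1-c": {"us-west1-b": 0.01}}
    placement = {"reviews-v2": "us-west1-c", "ratings-v1": "us-west1-b",
                 "productpage-v1": "us-west1-b"}
    flows = [("reviews-v2", "ratings-v1", 1.0), ("productpage-v1", "reviews-v2", 2.0)]
    print(best_move(flows, placement, rates))
```

Evaluating the whole placement, rather than only the moved service's outbound flows, accounts for traffic that other services send to it as well.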

Long-Term Plans

We think the ideal cost tool will also be a Kubernetes scheduler plugin that takes into account the cost of placing a service in a given locality, based on historical data. The cluster administrator could weight the plugin according to their preferred tradeoff between cost and latency/downtime. The current observability part of the cost tool would run separately; users of the CLI could still read from it, and so would the custom scheduler plugin. The plugin would act on the insights found by boosting the scheduling score of Nodes on which scheduling the Pod would decrease egress cost.
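As a rough illustration of the scoring idea, the sketch below ranks candidate Nodes by the egress cost a Pod would incur if placed there, given its expected outbound traffic per peer locality. Everything here is hypothetical: real scheduler plugins are written in Go against the Kubernetes scheduling framework, and this sketch leaves out the latency/downtime weighting. The 0 to 100 range mirrors the score range Kubernetes scheduler plugins use.

```python
from typing import Dict

Rates = Dict[str, Dict[str, float]]  # dollars per gigabyte, source -> destination


def egress_cost(zone: str, peer_traffic_gb: Dict[str, float], rates: Rates) -> float:
    """Expected egress cost of a Pod in `zone` sending traffic to peers in each locality."""
    return sum(
        rates[zone][peer] * gb
        for peer, gb in peer_traffic_gb.items()
        if peer != zone  # assumption: same-locality traffic is free
    )


def node_scores(node_zones: Dict[str, str], peer_traffic_gb: Dict[str, float],
                rates: Rates, max_score: int = 100) -> Dict[str, int]:
    """Score each Node from 0 to max_score; a cheaper placement gets a higher score."""
    costs = {node: egress_cost(zone, peer_traffic_gb, rates)
             for node, zone in node_zones.items()}
    worst = max(costs.values(), default=0.0)
    if worst == 0:
        return {node: max_score for node in costs}
    return {node: round(max_score * (1 - cost / worst)) for node, cost in costs.items()}


if __name__ == "__main__":
    rates = {"us-west1-b": {"us-west1-c": 0.05}, "us-west1-c": {"us-west1-b": 0.05}}
    nodes = {"abc": "us-west1-b", "xyz": "us-west1-c"}
    # Historical data: the Pod sends 10 GB to peers in us-west1-b.
    print(node_scores(nodes, {"us-west1-b": 10.0}, rates))
```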

All of this would run in the background, and the tool would offer an audit subcommand, which would show all scheduling actions influenced, a detailed analysis of each influence, and a total amount saved by the scheduler. This amount is derived by taking the egress cost the cluster would have incurred had the Pod been scheduled to the Node it would have landed on without the tool’s influence, and subtracting the current (influenced) cost.

We imagine an audit function to look something like this:

$ istio-cost-analyzer audit

Insights actioned on: 3

[07/29/22] Scheduled reviews-v2 to node xyz in us-west1-c instead of node abc in us-west1-b. Saved: $315.12.
[08/01/22] Scheduled details-v1 to node foo in ap-south1-a instead of node bar in us-west1-c. Saved: $110.95.
[08/02/22] Scheduled reviews-v1 to node abc in us-west1-b, instead of node def in us-east1-c. Saved: $255.48.

Total saved by scheduler: $681.55

Try It Out!

The cost analyzer needs users! It’s easy to install and run, takes up minimal resources, and offers an excellent report on your costs. We hope you find it useful and we welcome contributions from the world, especially cost data from the other cloud providers that don’t yet work out of the box.

Try the Istio Cost Analyzer now ›

If you want to get started with a production-ready Istio distribution today, try Tetrate Istio Distro (TID). TID is a vetted, upstream distribution of Istio that is simple to install, manage, and upgrade with FIPS-certified builds available for FedRAMP environments. If you need a unified and consistent way to secure and manage services across a fleet of applications, check out Tetrate Service Bridge (TSB), our comprehensive edge-to-workload application connectivity platform built on Istio and Envoy.