LLM Gateway Use Cases

Unified Model Access

Use a single key to access every LLM.

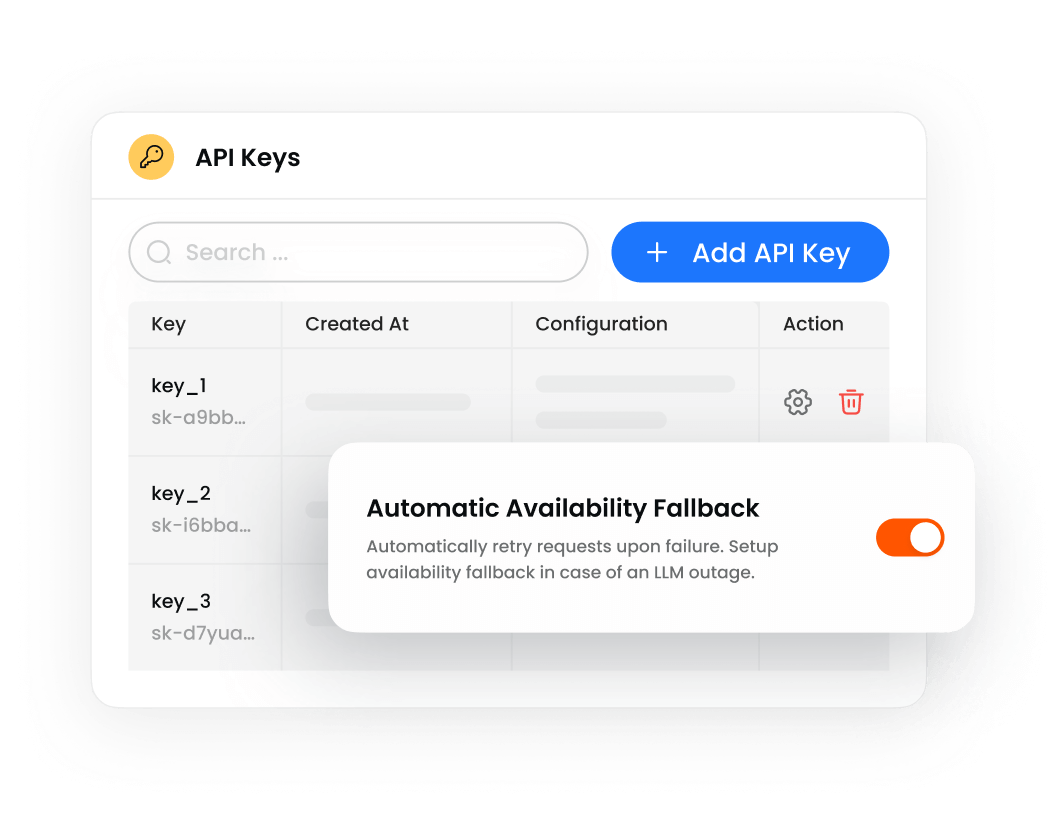

Automatic Fallback

Retry or failover automatically in case of an LLM outage.

Cost Management

Optimize costs with intelligent request routing.

Get an approved LLM catalog, unified model access, automatic fallback, & cost management

Use a single key to access every LLM.

Retry or failover automatically in case of an LLM outage.

Optimize costs with intelligent request routing.

Get access to an extensive library of LLMs. Curate your catalog with an approved list of LLMs of your choice.

Set rules for automatic retry or failover in case of response failure. Set routing rules based on token limits or request types.

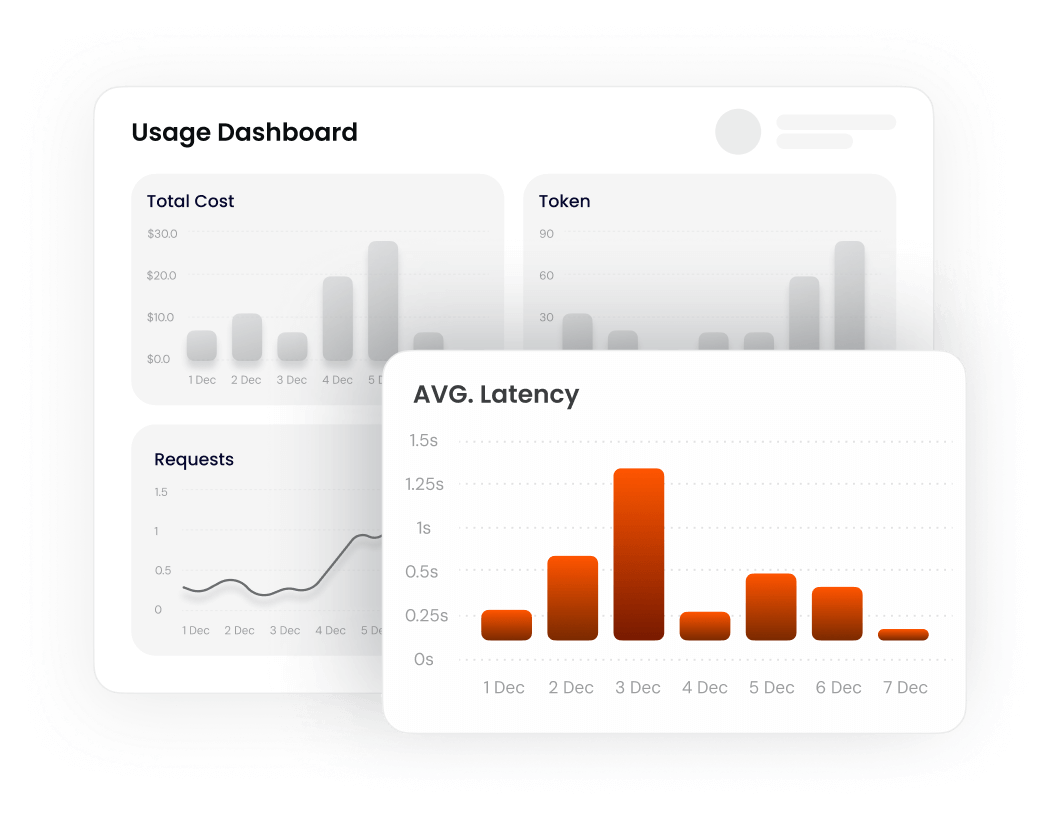

Gain insight on token usage and app consumption. Optimize for costs, performance, and/or speed.

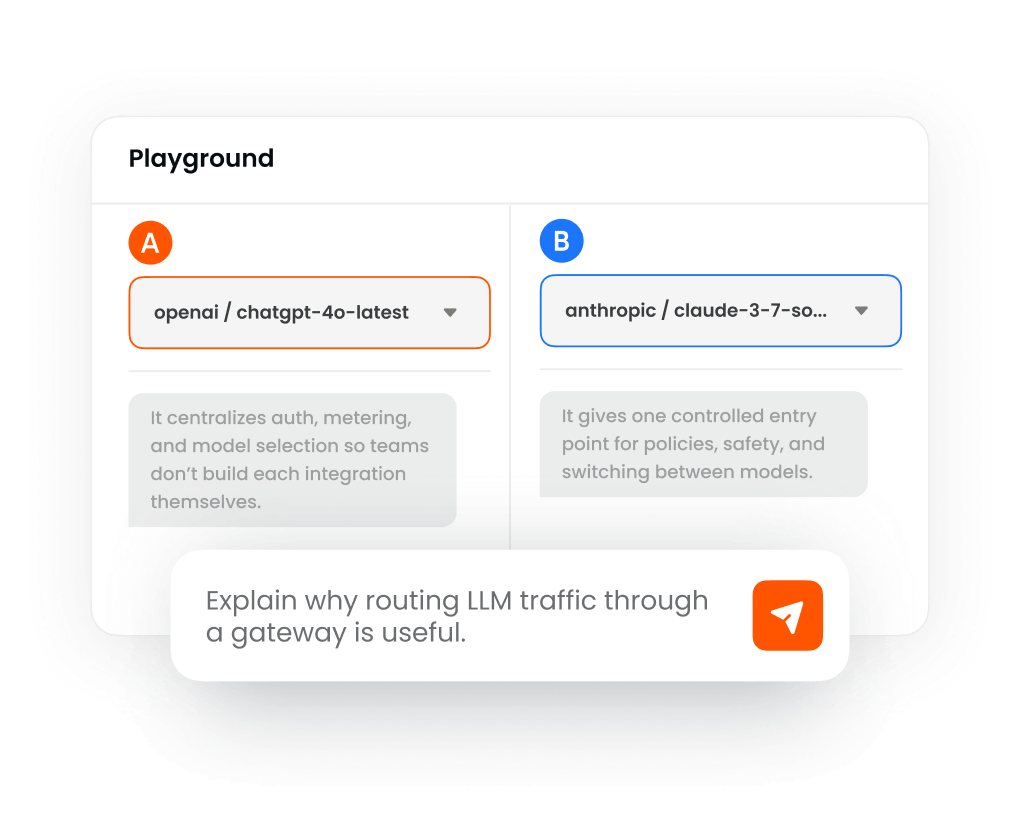

Experiment with outputs from alternative LLMs to rapidly test and compare model response quality.

One, Unified API

Access OpenAI, Claude, Gemini, Mistral, & custom models.

OpenAI-compatible interface

Trace AI ownership through traffic metadata analysis.

Model Lifecycle Management

Inventory across end user devices, applications, models, and providers.

Regional Routing

Audit-ready architecture to record and capture evidence

Distributed Traffic Management

Region-aware load balancing across model deployments & session stickiness for conversational agents.

Resilient Request Handling

Retry / fallback / failover policies to maintain continuity.

Intelligent Routing Strategies

Dynamic routing based on cost, latency, policy with traffic splitting for migrations and evaluation.

Enforce Usage Limits

TPM / RPM / concurrency controls with user and group rate limits.

Track Cost + Consumption

Token usage, spend visibility, and detailed reporting.

Control Team Budgets

Configurable budget thresholds and policy-based enforcement.

Measure AI Performance

Per-model latency metrics and real-time visibility.

Trace every request

Distributed tracing, correlation IDs, and OpenTelemetry export.

Understand usage patterns

Analytics dashboards for consumptions, trends, and ops insights.

Flexible Deployment Options

Managed, hybrid, or self-hosted in your cloud accounts.

Operate Across Regions

Multi-region data planes, centrally coordinated.

Private networking

Run on private enterprise networks. Isolated infrastructure by default.