AI Gateway

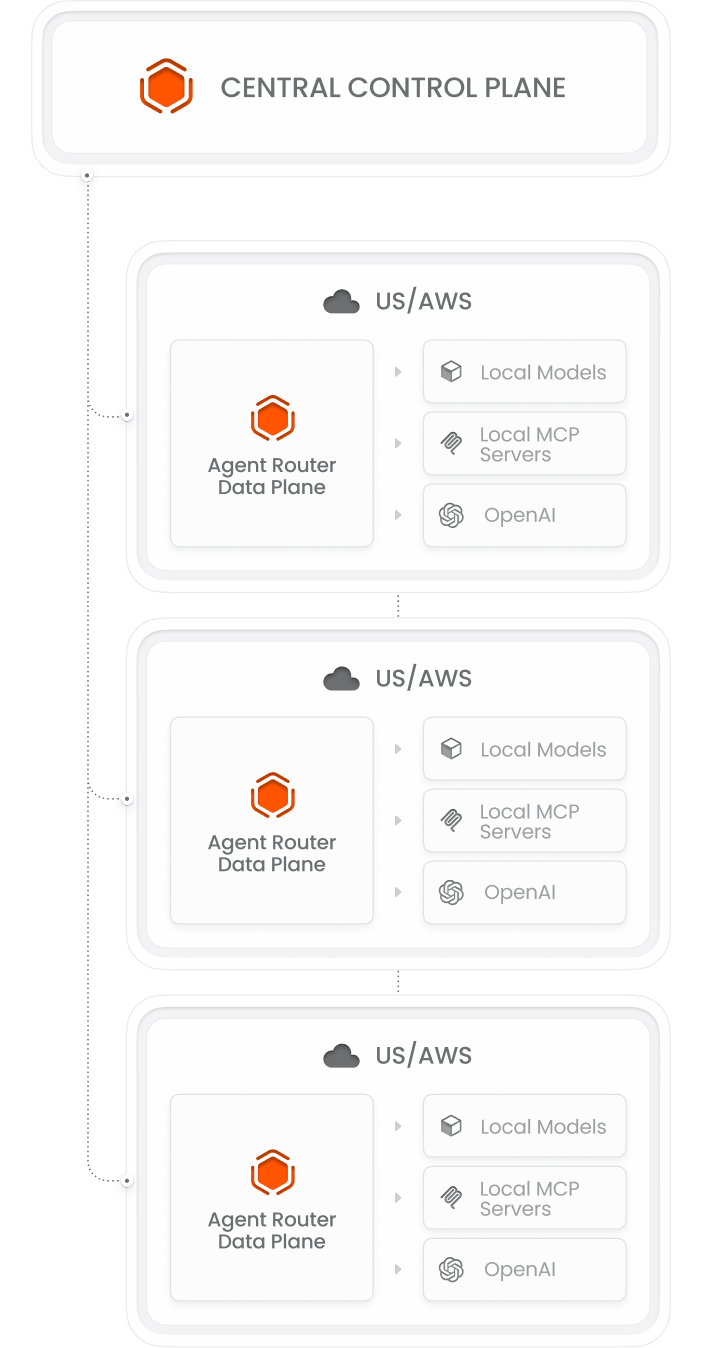

Routes all LLM traffic from your agents, handles provider failover, enforces token budgets, and produces unified logs across every team and provider. It is the core of Agent Router.

- Multi-model routing with automatic failover

- Per-team, per-agent token budgets enforced inline

- Unified logs across all providers and frameworks

- OpenAI-compatible API that works with existing code

- Enterprise SSO for team and user attribution
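
To make the failover and inline budget behavior listed above concrete, here is a minimal Python sketch. It is not the AI Gateway implementation or its API: the `Gateway` class, the stub providers, the team name, and the token accounting are all hypothetical, chosen only to illustrate the idea of checking a budget before the call and falling back to the next provider on error.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical provider signature: (prompt, max_tokens) -> (text, tokens_used)
ProviderCall = Callable[[str, int], Tuple[str, int]]


class BudgetExceeded(Exception):
    """Raised when a team's remaining budget cannot cover a request."""


class AllProvidersFailed(Exception):
    """Raised when every provider in the route has failed."""


@dataclass
class Gateway:
    # Ordered (name, call) pairs; earlier entries are preferred.
    providers: List[Tuple[str, ProviderCall]]
    # Remaining tokens per team (illustrative accounting only).
    budgets: Dict[str, int]
    # One log stream for all providers and teams.
    log: List[dict] = field(default_factory=list)

    def complete(self, team: str, prompt: str, max_tokens: int) -> str:
        # Inline enforcement: reject before any provider is called.
        if self.budgets.get(team, 0) < max_tokens:
            raise BudgetExceeded(team)
        for name, call in self.providers:
            try:
                text, used = call(prompt, max_tokens)
            except Exception as exc:
                # Record the failure, then fail over to the next provider.
                self.log.append(
                    {"team": team, "provider": name, "ok": False, "error": str(exc)}
                )
                continue
            self.budgets[team] -= used
            self.log.append(
                {"team": team, "provider": name, "ok": True, "tokens": used}
            )
            return text
        raise AllProvidersFailed(team)


def flaky_provider(prompt: str, max_tokens: int) -> Tuple[str, int]:
    # Stand-in for a primary provider that is currently down.
    raise TimeoutError("primary unavailable")


def backup_provider(prompt: str, max_tokens: int) -> Tuple[str, int]:
    # Stand-in for a healthy provider; counts words as "tokens".
    return "echo: " + prompt, min(len(prompt.split()), max_tokens)


gw = Gateway(
    providers=[("primary", flaky_provider), ("backup", backup_provider)],
    budgets={"search-team": 10},
)
reply = gw.complete("search-team", "hello from agent", max_tokens=8)
print(reply)
print(gw.budgets, len(gw.log))
```

In this sketch the caller never sees the primary provider's failure; it only shows up in the shared log, next to the successful backup call and the tokens charged to the team.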