Built for Production Engineers

Ideal for teams focused on throughput, reliability, and consistent performance in real-world environments.

LiteLLM’s Python proxy becomes a bottleneck as agent traffic grows.

Agent Router is built on Envoy for high performance under load.

Built on Envoy and Golang — the same distributed systems stack powering production infrastructure at scale. Designed for enterprise delivery, not prototyping.

Python-based library and proxy server optimized for developer experimentation. Best suited for teams prototyping, not running production workloads at scale.

Purpose-built for enterprise operators: dedicated admin UX, immutable audit logs for EU AI Act compliance, and governance controls designed for regulated industries — not retrofitted from an OSS project.

Community-driven feature roadmap prioritizes GitHub star breadth over enterprise depth. Admin UX is developer-first; enterprise governance features require significant custom configuration.

Production-ready MCP Gateway with a curated server catalog, MCP Profiles for bundling tool sets, OAuth and API key authentication, and unified metrics and observability — all available today.

MCP support is experimental. Not recommended for teams that need stable, auditable tool access in production agent workflows.

Proven track record operating critical infrastructure in regulated industries — including CVE remediation, compliance audits, and SEV0 incident response. Built by the team that co-created and maintains Envoy AI Gateway.

Self-hosted Python proxy with no enterprise SLAs, no published audit trail capabilities, and limited third-party validation for supply chain or operational readiness in regulated environments.

Are you seeing latency spikes above a few hundred RPS?

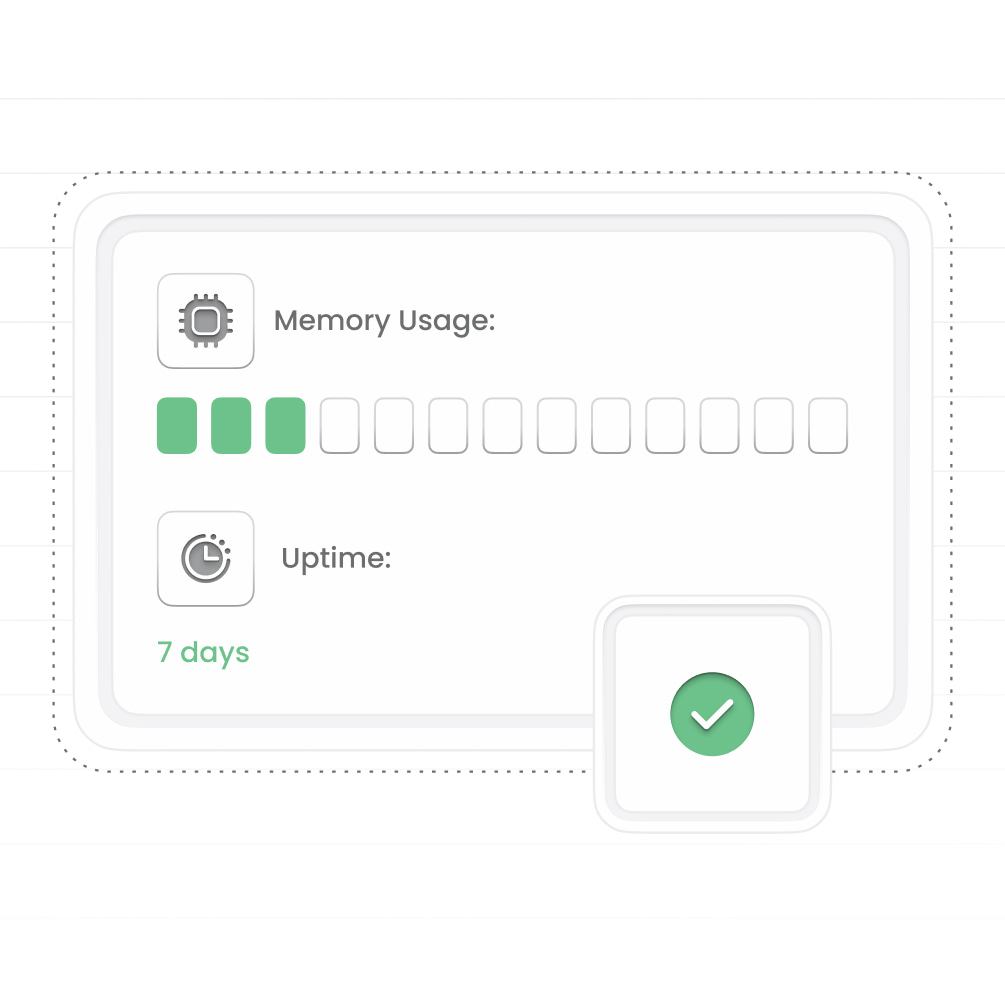

Do you need to restart your gateway regularly?

Do your rate limits slip under concurrency?

Is logging affecting performance?

If any of these sound familiar, you need an enterprise-grade gateway.

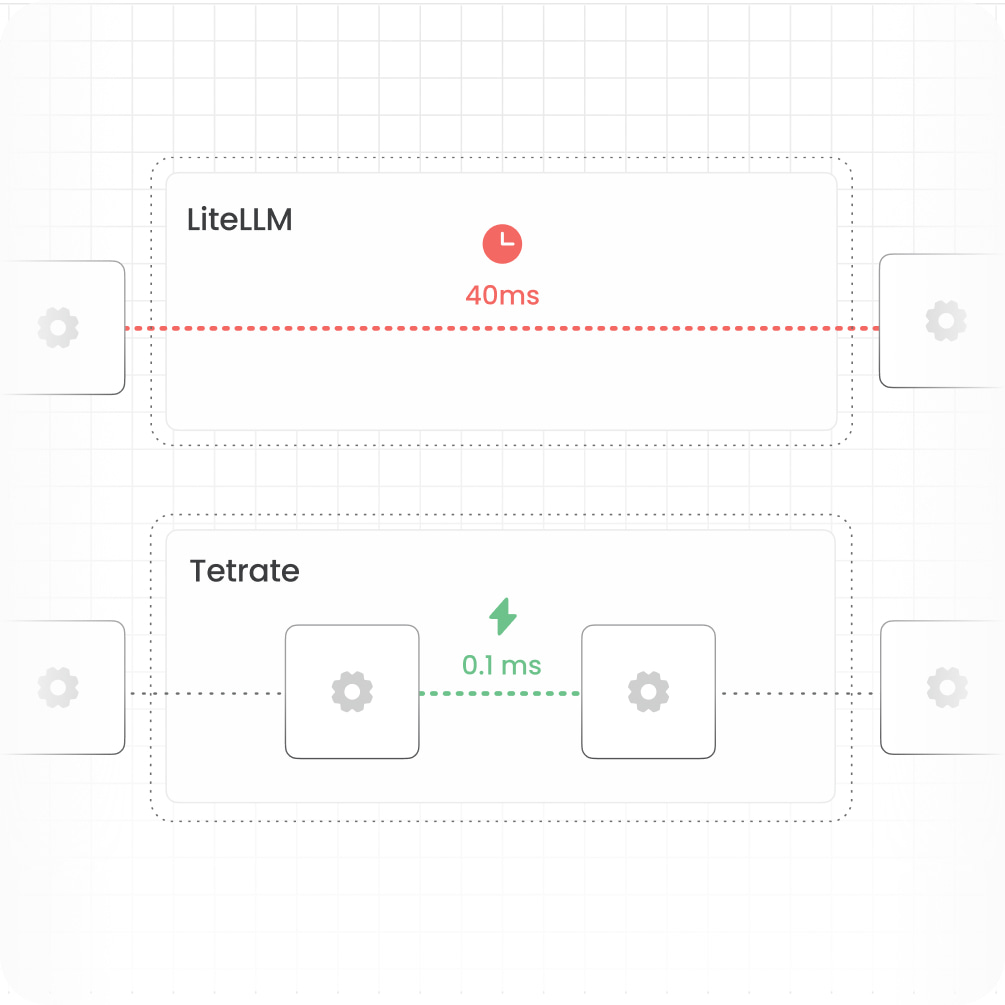

LiteLLM

LiteLLM begins to bottleneck beyond ~300 RPS. In documented cases, latency degraded from 200 ms to over 12 seconds under load, and adding more instances did not resolve the issue.

Tetrate Agent Router

Built on the Envoy proxy, Tetrate Agent Router is proven at high throughput in production, handling significantly higher RPS with consistent performance.

LiteLLM

Under sustained traffic, LiteLLM containers can grow to 12 GB and crash. Teams end up relying on scheduled restarts every 6 to 8 hours just to keep the gateway healthy.

Tetrate Agent Router

Agent Router improves runtime stability for long-lived deployments, reducing forced restarts, OOM events, and performance drift over time. Envoy gives Agent Router a more deterministic memory profile.

LiteLLM

Performance can degrade once log volume grows large, with latency rising as operational history accumulates.

Tetrate Agent Router

Logging stays off the critical path. Logs are written asynchronously, while policies and pricing remain cached in memory so requests do not wait on database calls.
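
To make the idea concrete, here is a minimal Python sketch of logging that stays off the request path. It is an illustration of the general pattern only, not Agent Router's implementation: `AsyncLogger`, `POLICY_CACHE`, and `handle_request` are hypothetical names, and the upstream model call is replaced by a stand-in string.

```python
import queue
import threading

# Hypothetical in-memory policy cache; a real gateway would refresh it in the background.
POLICY_CACHE = {"team-a": {"max_tokens": 4096}}


class AsyncLogger:
    """Buffers log records in memory and writes them from a background thread."""

    def __init__(self, sink, maxsize=10_000):
        self._queue = queue.Queue(maxsize=maxsize)
        self._sink = sink  # any callable that persists one record
        self._worker = threading.Thread(target=self._drain, daemon=True)
        self._worker.start()

    def log(self, record):
        """Enqueue without blocking; report False if the buffer is full."""
        try:
            self._queue.put_nowait(record)
            return True
        except queue.Full:
            return False

    def _drain(self):
        while True:
            record = self._queue.get()
            try:
                self._sink(record)
            except Exception:
                pass  # a real implementation would count or report write failures
            finally:
                self._queue.task_done()

    def flush(self):
        """Block until every queued record has been written (shutdown or tests)."""
        self._queue.join()


def handle_request(team, prompt, logger):
    policy = POLICY_CACHE[team]   # memory lookup, no database call
    response = f"echo: {prompt}"  # stand-in for the upstream model call
    logger.log({"team": team, "chars": len(prompt), "max_tokens": policy["max_tokens"]})
    return response
```

The request handler only pays for a dictionary lookup and a non-blocking enqueue; the slow write happens on the worker thread. The trade-off in this sketch is that records are dropped when the buffer is full, which a production system would need to surface as a metric.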

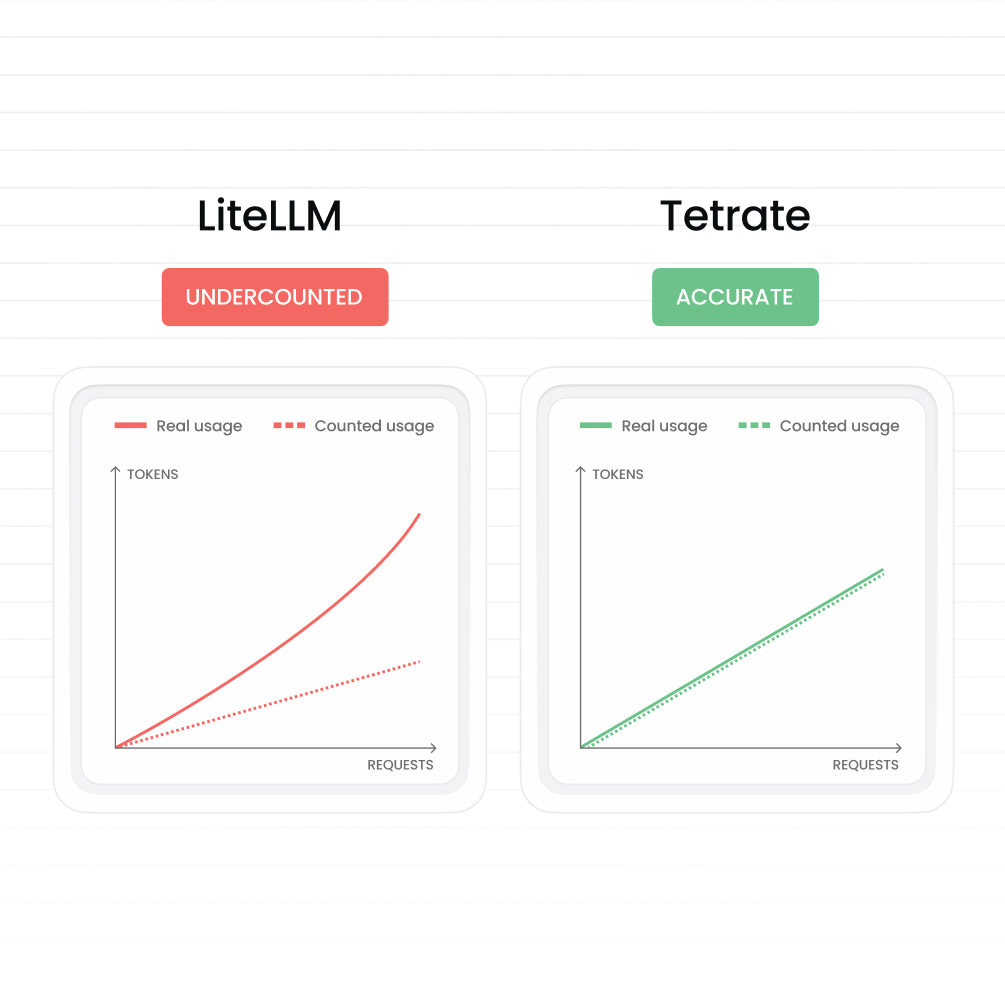

LiteLLM

Usage is counted after the call completes, so concurrent requests can exceed limits before records catch up.

Tetrate Agent Router

Limits and budgets are enforced before execution. Token reservations happen atomically, so teams can trust controls even under concurrency.
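
The difference between counting after the call and reserving before it can be sketched in a few lines of Python. This is a single-process illustration under stated assumptions, not Agent Router's actual mechanism: `TokenBudget`, `try_reserve`, and `settle` are hypothetical names, and a multi-instance deployment would need the counter in a shared store with an equivalent atomic operation.

```python
import threading


class TokenBudget:
    """Reserves tokens before a call runs, so concurrent requests cannot overshoot."""

    def __init__(self, limit):
        self._limit = limit
        self._reserved = 0
        self._lock = threading.Lock()

    def try_reserve(self, tokens):
        """Check and increment under one lock, so the two steps are atomic."""
        with self._lock:
            if self._reserved + tokens > self._limit:
                return False  # reject before the upstream call is made
            self._reserved += tokens
            return True

    def settle(self, reserved, actual):
        """After the call completes, hand back any tokens that were not used."""
        with self._lock:
            self._reserved -= max(reserved - actual, 0)

    @property
    def used(self):
        with self._lock:
            return self._reserved
```

Because the check and the increment happen inside the same critical section, fifty simultaneous requests for 100 tokens each against a 1,000-token budget admit exactly ten. With post-call accounting, all fifty could pass the check before any usage is recorded.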

LiteLLM

Per-request overhead can add meaningful latency, especially in agent loops and multi-step workflows where delays stack up.

Tetrate Agent Router

The request path stays lean, with near-zero added latency, so multi-step agent workflows remain fast and responsive.