Envoy Proxy

What Is Envoy Proxy and Why Do You Need It?

Many organizations adopt the microservices architecture to deliver superior customer service using technologies such as cloud and containers. However, they face operational challenges such as controlling, monitoring, and ensuring the security of their microservices. Some of the common challenges are:

- Maintaining the network in a heterogeneous system: Microservices are typically heterogeneous, meaning they are built using different languages, libraries, and frameworks. Network behavior such as rate limiting, circuit breaking, and timeouts is therefore implemented separately in each language. That makes it challenging for the team to manage the network centrally and keep it secure.

- Difficulty in monitoring traffic: It is very difficult for architects to implement stats, logging, and tracing across different services and network infrastructures such as load balancers. Without standardization, it becomes daunting to trace the root cause of a problem in a network by scanning the health of multiple services separately and identifying the point of failure.

- Scaling microservices is a pain: Scaling application features becomes challenging as developers spend most of their time writing network or security logic. Oftentimes, developers are frustrated because they have to spend time debugging the network or writing security policies instead of spending time on business logic.

Lyft built Envoy proxy to overcome those challenges in microservices. Envoy proxy acts as a data plane for large-scale microservice architecture to manage network traffic and also ensure security.

Tetrate offers an enterprise-ready, 100% upstream distribution of Envoy Gateway, Tetrate Enterprise Gateway for Envoy (TEG). TEG is the easiest way to get started with Envoy for production use cases.

Introducing Envoy Proxy

Envoy is an open-source edge and service proxy designed for cloud-native applications. It’s written in C++ and serves as the data plane for a service mesh.

The idea is to run Envoy as a sidecar next to each service in an application, abstracting the network from the core business logic. Envoy provides load balancing, resiliency features such as timeouts, circuit breakers, and retries, as well as observability and metrics. The best part is that Envoy can be configured dynamically through a set of network APIs. These APIs are called discovery services, or xDS for short.

In addition to traditional load balancing between different instances, Envoy also allows you to implement retries, circuit breakers, rate limiting, and so on. While doing all that, Envoy collects rich metrics about the traffic passing through it and exposes them for use in tools such as Grafana.
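To make the idea of proxy-level retries concrete, here is a minimal sketch of retrying a failed call with jittered exponential backoff. It illustrates the general technique only and is not Envoy's implementation. The names `call_with_retries` and `UpstreamError`, and the default values, are assumptions made up for this example.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class UpstreamError(Exception):
    """Hypothetical error standing in for a retriable upstream failure."""


def call_with_retries(
    request: Callable[[], T],
    max_retries: int = 2,
    base_interval: float = 0.025,
    sleep: Callable[[float], None] = time.sleep,
    rng: Callable[[], float] = random.random,
) -> T:
    """Call `request`, retrying retriable failures with jittered backoff."""
    attempt = 0
    while True:
        try:
            return request()
        except UpstreamError:
            if attempt >= max_retries:
                raise  # retry budget exhausted: surface the failure
            # Wait a random duration in [0, base_interval * 2^attempt).
            sleep(rng() * base_interval * (2 ** attempt))
            attempt += 1
```

Because this logic lives in the proxy rather than in each service, it only has to be written and tuned once, whatever language the services use.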

There are a few alternatives to Envoy proxy, such as linkerd2-proxy (the Rust-based proxy that Linkerd is built on), NGINX, and HAProxy.

Features of Envoy Proxy

Envoy provides a number of benefits that make software development and delivery faster, easier, more reliable, and more secure. The following is a brief description of the key capabilities that you can expect Envoy to provide:

Out-of-Process Architecture

Envoy proxy is designed to run alongside every service. Together, the Envoy proxies form a transparent mesh for communication among services, or between services and external clients, without the services being aware of the network topology. Envoy works with services written in any language, such as Java, C++, Go, PHP, or Python, transparently connecting them and eliminating the need for language-specific communication frameworks. Envoy is also easy to deploy across the entire infrastructure and can be upgraded dynamically.

L3/L4 Filter Architecture

At its core, Envoy is an L3/L4 network proxy, i.e., it facilitates communication at the network and transport layers. A platform team can use built-in filters to perform different tasks with Envoy, such as raw TCP proxying, UDP proxying, HTTP proxying, TLS client certificate authentication, etc.

HTTP L7 Filter Architecture

Envoy also supports an HTTP L7 filter layer, i.e., it facilitates application-layer communication. Infrastructure and platform teams can use HTTP filters in the HTTP connection management subsystem to perform tasks such as buffering, routing/forwarding, rate limiting, sniffing Amazon’s DynamoDB, etc.

HTTP/1.1 and HTTP/2 Support

Envoy supports both HTTP/1.1 and HTTP/2 protocols out of the box and bridges the communication channel between client and target servers. Envoy also supports gRPC, which is based on the HTTP/2 protocol. It can be used as the routing and load balancing substrate for gRPC requests and responses.

HTTP/3 Support

Since the 1.19.0 release, Envoy has supported HTTP/3 for both upstream and downstream communication. Envoy can also translate between any combination of HTTP/1.1, HTTP/2, and HTTP/3.

HTTP L7 Routing

When operating in HTTP mode, Envoy offers a routing mechanism that routes and redirects requests based on parameters such as path, authority, content type, runtime values, etc. Platform teams find this useful when they want to use Envoy as a front/edge API gateway.
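The routing idea can be sketched in a few lines of Python. This is a simplified illustration, assuming first-match-wins semantics over an ordered route list and matching only on authority and path prefix. The `Route` type, `pick_cluster` function, and the host and cluster names are hypothetical and not part of Envoy.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Route:
    authority: str    # host to match, or "*" for any host
    path_prefix: str  # request path must start with this prefix
    cluster: str      # hypothetical name of the backend cluster


def pick_cluster(routes: Sequence[Route], authority: str, path: str) -> Optional[str]:
    """Return the cluster of the first route that matches, or None."""
    for route in routes:
        host_ok = route.authority in ("*", authority)
        if host_ok and path.startswith(route.path_prefix):
            return route.cluster
    return None


# Illustrative route table: more specific routes come first.
ROUTES = [
    Route("api.example.com", "/users", "users-service"),
    Route("api.example.com", "/", "default-api"),
    Route("*", "/", "fallback"),
]
```

With this table, a request for `api.example.com/users/42` would go to `users-service`, while a request for any other host falls through to `fallback`.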

Service Discovery and Dynamic Configuration

Envoy uses a layered set of dynamic configuration APIs for service discovery. The layers provide dynamic updates such as host information, backend clusters, listening sockets, HTTP routing, and cryptographic items. For a simpler deployment, backend host discovery can be made through DNS resolution, with further layers replaced by static configuration files.

Health Checking

Envoy includes a health checking subsystem that can perform active health checks of the services in its clusters. Envoy then combines service discovery and health check data to decide which hosts are eligible load balancing targets.
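As a rough illustration of how health data can feed load balancing, the sketch below rotates through a host list and skips hosts that have been reported unhealthy. It is a deliberately simplified model, not Envoy's algorithm, and the `HealthAwareRoundRobin` class is a name invented for this example.

```python
from typing import Dict, List


class HealthAwareRoundRobin:
    """Round-robin picker that skips hosts marked unhealthy."""

    def __init__(self, hosts: List[str]) -> None:
        self._hosts = list(hosts)
        self._healthy: Dict[str, bool] = {host: True for host in hosts}
        self._next = 0

    def report_health(self, host: str, healthy: bool) -> None:
        """Record the latest health check result for a host."""
        self._healthy[host] = healthy

    def pick(self) -> str:
        """Return the next healthy host, visiting each host at most once."""
        for _ in range(len(self._hosts)):
            host = self._hosts[self._next]
            self._next = (self._next + 1) % len(self._hosts)
            if self._healthy[host]:
                return host
        raise RuntimeError("no healthy hosts available")
```

A production load balancer would also need a policy for the case where most or all hosts are unhealthy; this sketch simply raises an error.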

Advanced Load Balancing

Since Envoy is a self-contained proxy rather than a library, it can implement advanced load balancing techniques in a single place and make them available to any application. These load balancing and traffic management techniques include automatic retries, circuit breaking, request shadowing, rate limiting via an external rate-limiting service, and outlier detection.
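One of these techniques, circuit breaking, can be pictured as a cap on how much work is allowed in flight toward a backend: once the cap is reached, further requests are rejected immediately instead of queuing up. The sketch below shows that idea for concurrent requests only. The `RequestLimit` and `Overflow` names are assumptions for illustration, and the class is not thread-safe.

```python
from contextlib import contextmanager
from typing import Iterator


class Overflow(Exception):
    """Raised when a request is rejected because the limit is reached."""


class RequestLimit:
    """Caps the number of in-flight requests and counts rejections."""

    def __init__(self, max_requests: int) -> None:
        self.max_requests = max_requests
        self.in_flight = 0
        self.overflow_count = 0

    @contextmanager
    def admit(self) -> Iterator[None]:
        """Admit one request, or fail fast if the limit is already reached."""
        if self.in_flight >= self.max_requests:
            self.overflow_count += 1
            raise Overflow("too many in-flight requests")
        self.in_flight += 1
        try:
            yield
        finally:
            # Release the slot whether the request succeeded or failed.
            self.in_flight -= 1
```

Failing fast in this way keeps a slow backend from tying up resources in every caller.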

Front/Edge Proxy Support

There is substantial benefit in using the same software at the edge as inside the mesh (observability, manageability, maintainability, identical service discovery and load balancing algorithms, etc.). Envoy has a feature set that makes it well suited as an edge proxy for most modern web application use cases. This includes TLS termination, HTTP/1.1, HTTP/2, and HTTP/3 support, as well as HTTP L7 routing.

Observability

Envoy proxy offers statistics, access logging, and distributed tracing so that SREs can keep the service mesh running smoothly. Envoy emits statistics in three categories: downstream (incoming connections and requests from clients), upstream (outgoing connections and requests to backend hosts), and server (the Envoy instance itself, such as uptime and allocated memory). Together they help SREs understand network traffic and how the Envoy server is working. Envoy also integrates with third-party tools for logging and tracing. Using these, SREs and the infrastructure team can visualize call flows in distributed systems and understand serialization, parallelism, and sources of latency.
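As a small illustration of working with these statistics, the sketch below groups plain-text counters of the form `name: value` into downstream, upstream, and server buckets. The sample text is made up for this example, and classifying by name substring is a simplifying heuristic assumed here, not an official scheme.

```python
from collections import defaultdict
from typing import Dict


def group_stats(stats_text: str) -> Dict[str, Dict[str, int]]:
    """Group 'name: value' counter lines into coarse categories."""
    groups: Dict[str, Dict[str, int]] = defaultdict(dict)
    for line in stats_text.splitlines():
        name, sep, value = line.partition(": ")
        if not sep or not value.strip().isdigit():
            continue  # skip blank lines and non-integer entries
        if name.startswith("server."):
            group = "server"
        elif "upstream_" in name:
            group = "upstream"
        elif "downstream_" in name:
            group = "downstream"
        else:
            group = "other"
        groups[group][name] = int(value)
    return dict(groups)


# Illustrative sample only; real output contains many more entries.
SAMPLE = """\
http.ingress_http.downstream_rq_total: 120
cluster.backend.upstream_rq_total: 118
cluster.backend.upstream_rq_5xx: 3
server.uptime: 3600
"""
```

Calling `group_stats(SAMPLE)` yields one dictionary per category, which makes it easy to compare, for example, total upstream requests against upstream 5xx responses.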

Use Cases for Envoy Proxy

Envoy proxy has two common uses, as a service proxy (sidecar) and as a gateway.

Envoy As a Sidecar for Service-to-Service Communication

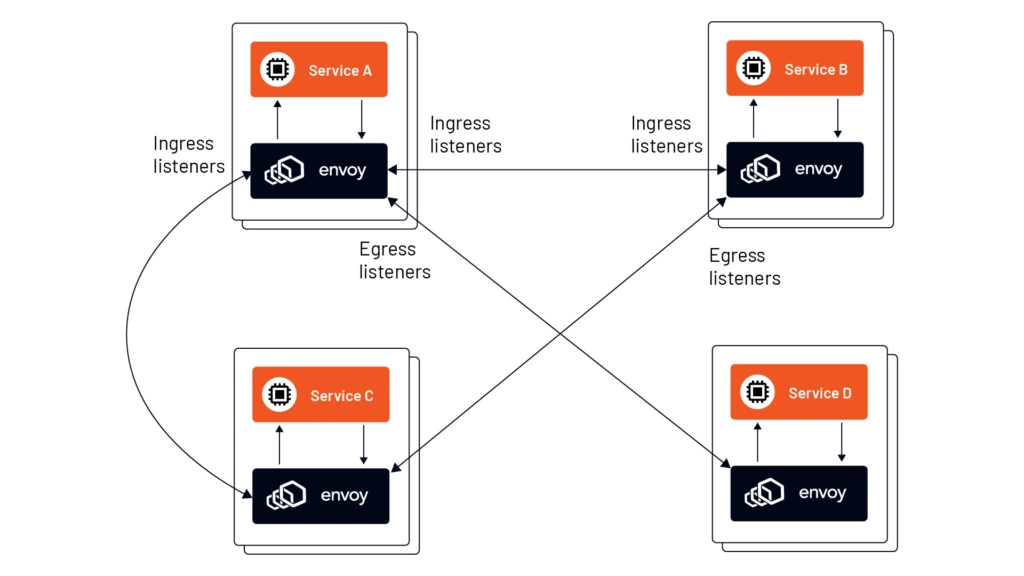

Envoy can act as an L3/L4 application proxy or sidecar proxy to enable communication among services. The Envoy proxy instance has the same lifecycle as its parent application. This way, one can extend applications across different technology stacks, including legacy applications that offer no extensibility.

All requests to and from the application pass through Envoy via listeners such as:

- Ingress: Ingress listeners take requests from other services in a service mesh and forward them to the local application the Envoy instance is sidecar to.

- Egress: Egress listeners take requests from the local application the Envoy instance is sidecar to and forward them to other services in the network.

The figure below shows how the Envoy proxy can attach to the application to enable communication using ingress and egress listeners.

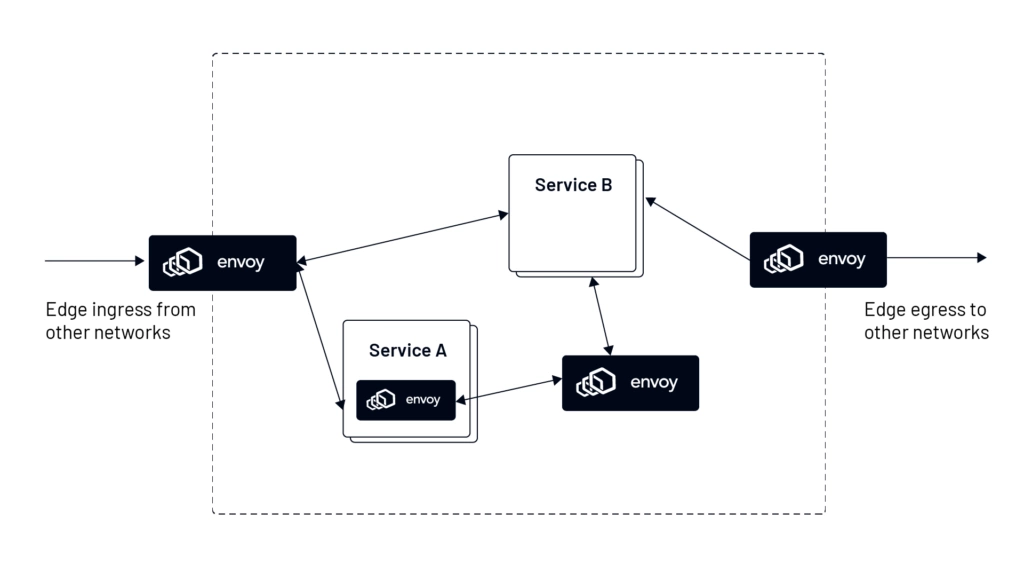

Envoy as API Gateway for Inbound Traffic

Envoy proxy can act as an API gateway that sits as a ‘front proxy’ between clients and the application. Envoy accepts inbound traffic, examines the information in the request, and directs it to where it needs to go inside a service mesh. The image below demonstrates the use of Envoy as a ‘front proxy’ or ‘edge proxy’, which receives requests from other networks. As an API gateway, the Envoy proxy is responsible for functionality such as traffic routing, load balancing, authentication, and monitoring at the edge.
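To give a feel for what a front-proxy setup involves, the sketch below builds a minimal static configuration as a Python dictionary and serializes it to JSON, one of the formats Envoy accepts for its bootstrap configuration. The field names follow the v3 configuration API as commonly documented, but treat the whole fragment as an assumption to validate against the Envoy version in use. The function name, listener and cluster names, host, and ports are hypothetical.

```python
import json
from typing import Any, Dict

HCM_TYPE = (
    "type.googleapis.com/envoy.extensions.filters.network."
    "http_connection_manager.v3.HttpConnectionManager"
)
ROUTER_TYPE = "type.googleapis.com/envoy.extensions.filters.http.router.v3.Router"


def front_proxy_config(listen_port: int, backend_host: str, backend_port: int) -> Dict[str, Any]:
    """Build a one-listener, one-cluster static config (illustrative only)."""
    http_connection_manager = {
        "@type": HCM_TYPE,
        "stat_prefix": "ingress_http",
        "route_config": {
            "name": "local_route",
            "virtual_hosts": [{
                "name": "all_hosts",
                "domains": ["*"],
                # Send every path to the single backend cluster.
                "routes": [{"match": {"prefix": "/"}, "route": {"cluster": "backend"}}],
            }],
        },
        "http_filters": [{
            "name": "envoy.filters.http.router",
            "typed_config": {"@type": ROUTER_TYPE},
        }],
    }
    listener = {
        "name": "edge_listener",
        "address": {"socket_address": {"address": "0.0.0.0", "port_value": listen_port}},
        "filter_chains": [{"filters": [{
            "name": "envoy.filters.network.http_connection_manager",
            "typed_config": http_connection_manager,
        }]}],
    }
    cluster = {
        "name": "backend",
        "type": "STRICT_DNS",
        "lb_policy": "ROUND_ROBIN",
        "load_assignment": {
            "cluster_name": "backend",
            "endpoints": [{"lb_endpoints": [{"endpoint": {"address": {
                "socket_address": {"address": backend_host, "port_value": backend_port},
            }}}]}],
        },
    }
    return {"static_resources": {"listeners": [listener], "clusters": [cluster]}}


if __name__ == "__main__":
    print(json.dumps(front_proxy_config(10000, "backend.internal", 8080), indent=2))
```

The printed JSON could be saved to a file and passed to Envoy with its `-c` option. The single catch-all route is the simplest possible case; a real gateway would typically define more specific routes, TLS settings, and authentication filters.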

Envoy for Kubernetes Ingress

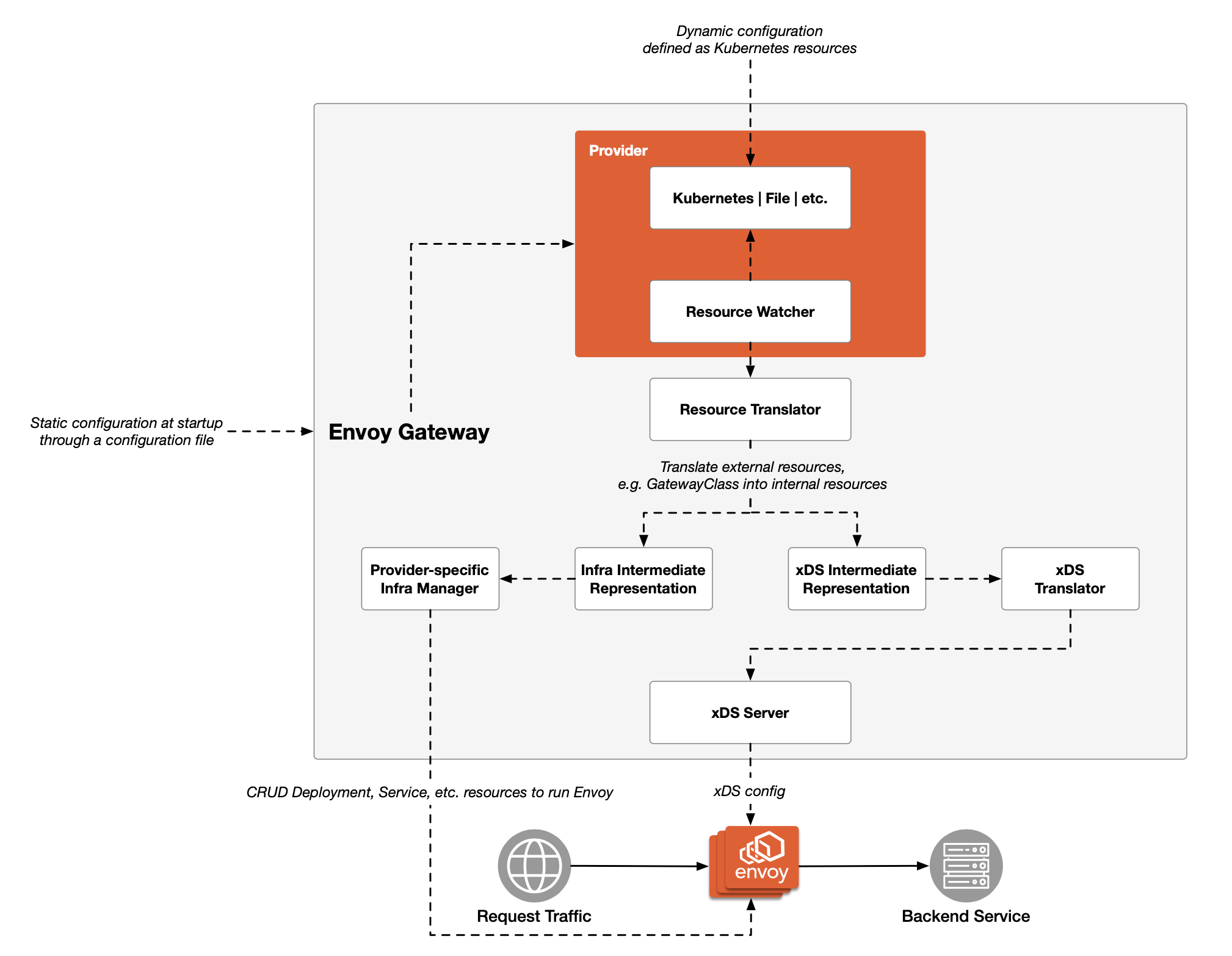

Envoy Gateway is an open source project that aims to make it easier to use Envoy as an API gateway by delivering a simplified deployment model and API layer, especially for Kubernetes ingress.

Benefits of Envoy Proxy

Platform teams that want to abstract the network from the application benefit the most from implementing Envoy proxy. With support for HTTP/1.1, HTTP/2, and HTTP/3, as well as transport protocols such as TCP and UDP, Envoy is very useful for traffic management in cloud-native applications. Here are the key benefits of using Envoy proxy:

Abstract your network from the application

Because Envoy is a highly optimized out-of-process service proxy, you can run it alongside any heterogeneous services, including container-based and VM-based applications, and have each service communicate through its local proxy.

Define granular traffic controls

With support for both L3/L4 and L7, you can easily configure network functions like load balancing, circuit breakers, retries, and timeouts in one central place.

Manage east-west and north-south traffic

Because it can serve both as a service-to-service proxy and as an API gateway, Envoy proxy helps in handling traffic within a data center (east-west traffic) and traffic entering or leaving it (north-south traffic). The platform team and network team can easily manage and monitor the traffic for multi-cloud applications.

Monitor traffic and ensure optimum platform performance

Envoy delivers stats, logs, and metrics, which the platform team can use to monitor and measure traffic, security violations, and the overall health of the application. Envoy can help the team deliver peak performance for their application.

Secure the entire stack

Application security and platform teams can strengthen the security of their platform by defining authentication and authorization rules in their Envoy proxies.

Scale on demand

Envoy proxy is horizontally scalable, which means you can add as many services to a service mesh as needed, and the proxy can be added to any number of services.

Additional Resources

- Envoy 101: Configure Envoy as Gateway. An easy-to-follow introduction to setting up Envoy as a gateway.

- Get Started with Envoy in 5 Minutes. Fundamentals of Envoy proxy, its building blocks, architecture and how it works.

- Learn Envoy Fundamentals. Free course to provide all concepts on Envoy with videos, labs, and quizzes.

- Learn Istio Fundamentals. Watch five hours of free video and learn a great deal about Istio.